ncsim Documentation

| Description | Comprehensive guide to the Networked Compute Simulator |

| Repository | https://github.com/ANRGUSC/ncsim |

| Copyright | Copyright © 2026 Autonomous Networks Research Group (ANRG), University of Southern California |

Table of Contents

ncsim Documentation¶

ncsim (Networked Compute Simulator) is a headless discrete-event simulator for evaluating task scheduling algorithms on heterogeneous networked systems. It models compute nodes, network links with WiFi interference, and DAG task graphs, producing detailed JSONL traces and JSON metrics for analysis.

Developed by the Autonomous Networks Research Group (ANRG) at the University of Southern California.

Key Features¶

- Deterministic simulation -- same inputs plus the same seed produce identical results every time

- HEFT / CPOP / Round Robin scheduling -- integrated with anrg-saga schedulers, plus manual task pinning

- Multi-hop routing -- direct, widest-path (max-min bandwidth), and shortest-path (min-latency) algorithms

- 802.11 WiFi PHY/MAC modeling -- log-distance path loss, SNR-based MCS rate adaptation for 802.11n/ac/ax

- Interference models -- none, proximity, CSMA/CA clique-based, and CSMA/CA Bianchi (dynamic SINR)

- Fair bandwidth sharing -- concurrent transfers on the same link share capacity proportionally

- Web visualization UI -- interactive experiment builder, Gantt charts, animated replay, and network topology views

- Structured output -- JSONL event traces and JSON summary metrics for automated analysis

Guide Roadmap¶

This documentation is organized into eight sections, each covering a different aspect of ncsim:

| # | Section | What You Will Learn |

|---|---|---|

| 1 | Getting Started | Install ncsim, its dependencies, and the optional visualization frontend |

| 2 | Core Concepts | Understand the architecture, simulation model, scheduling, routing, and interference |

| 3 | Scenarios | Write and customize YAML scenario files that define networks, DAGs, and configurations |

| 4 | CLI Usage | Run simulations from the command line, interpret output files, and automate batch experiments |

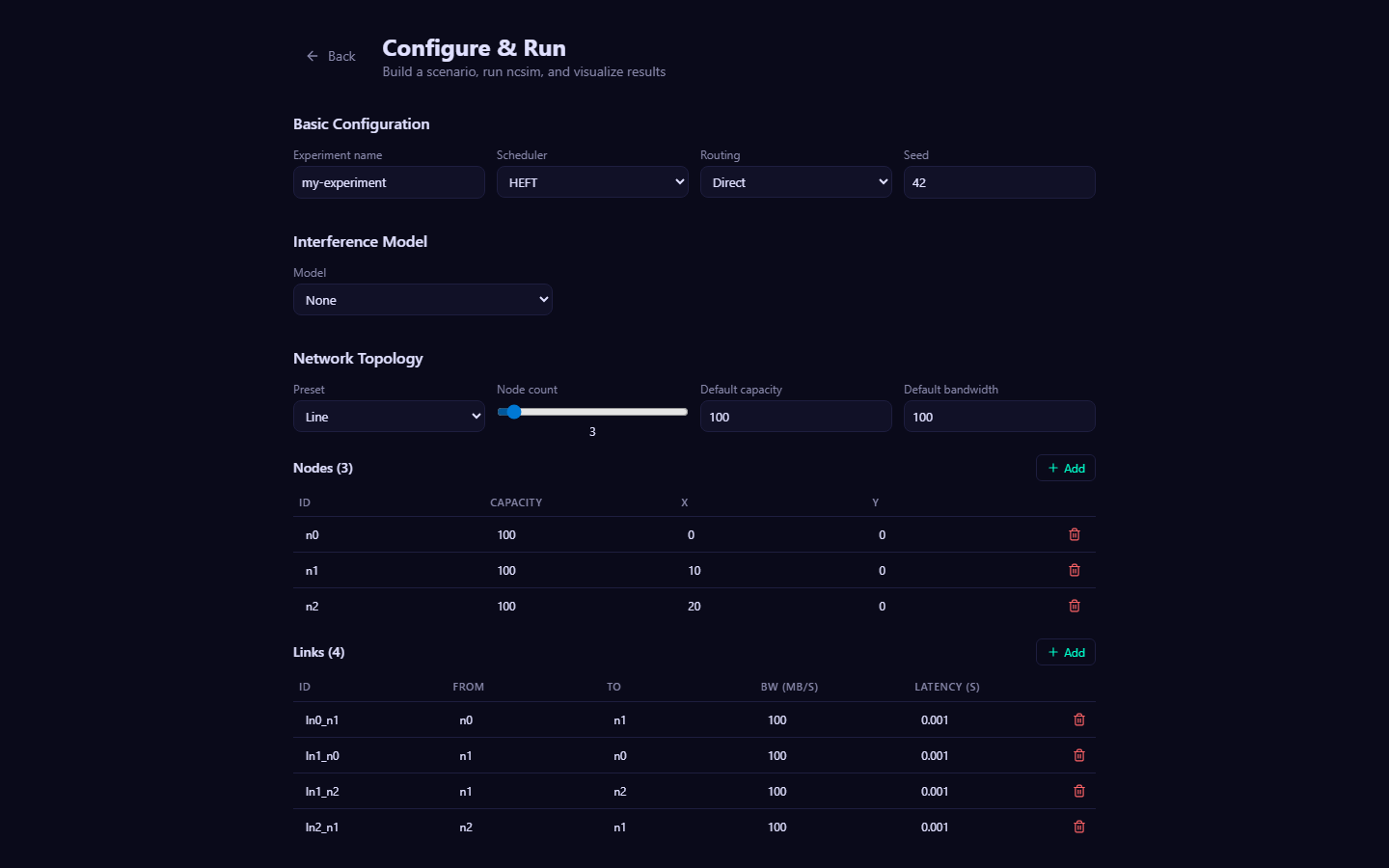

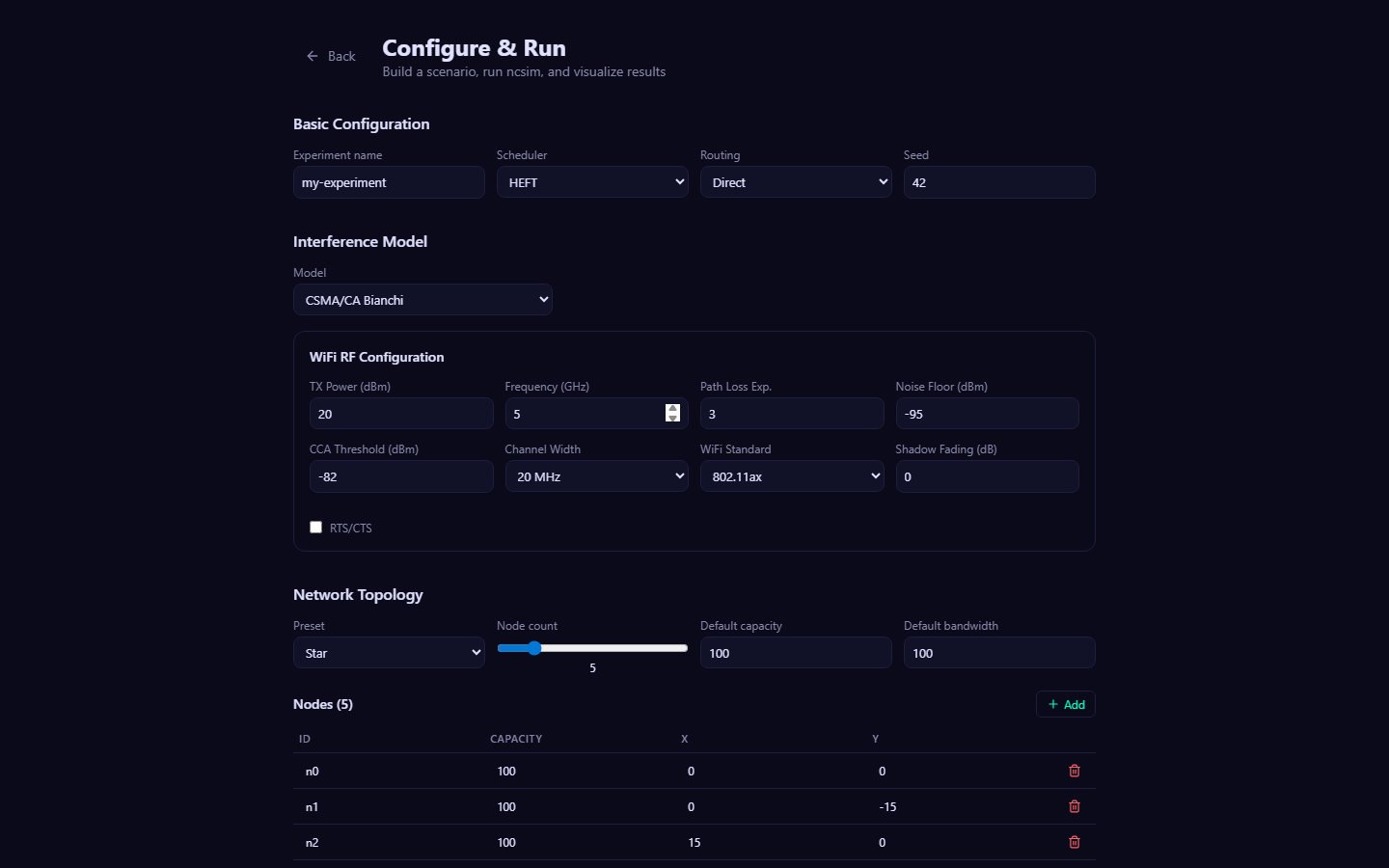

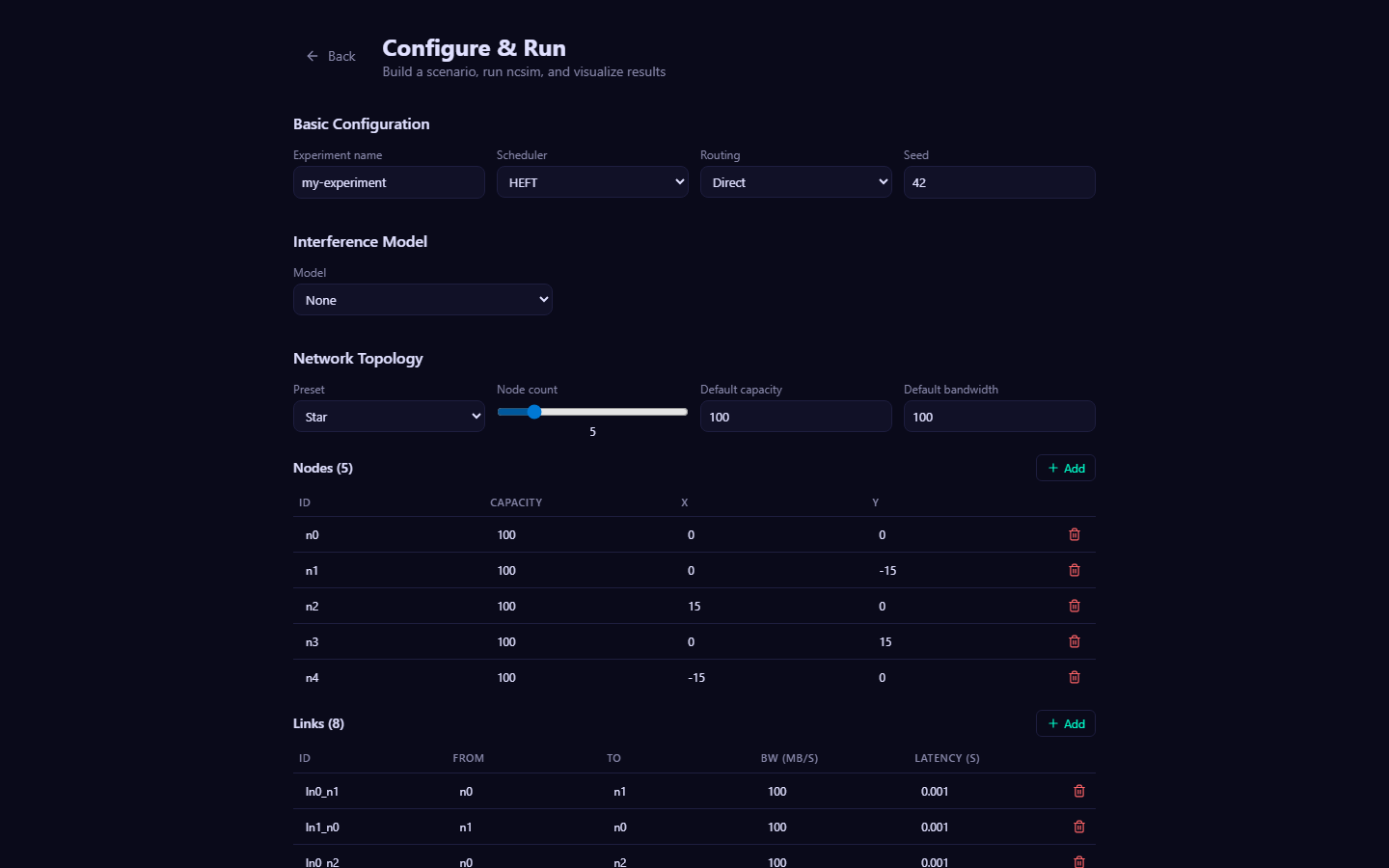

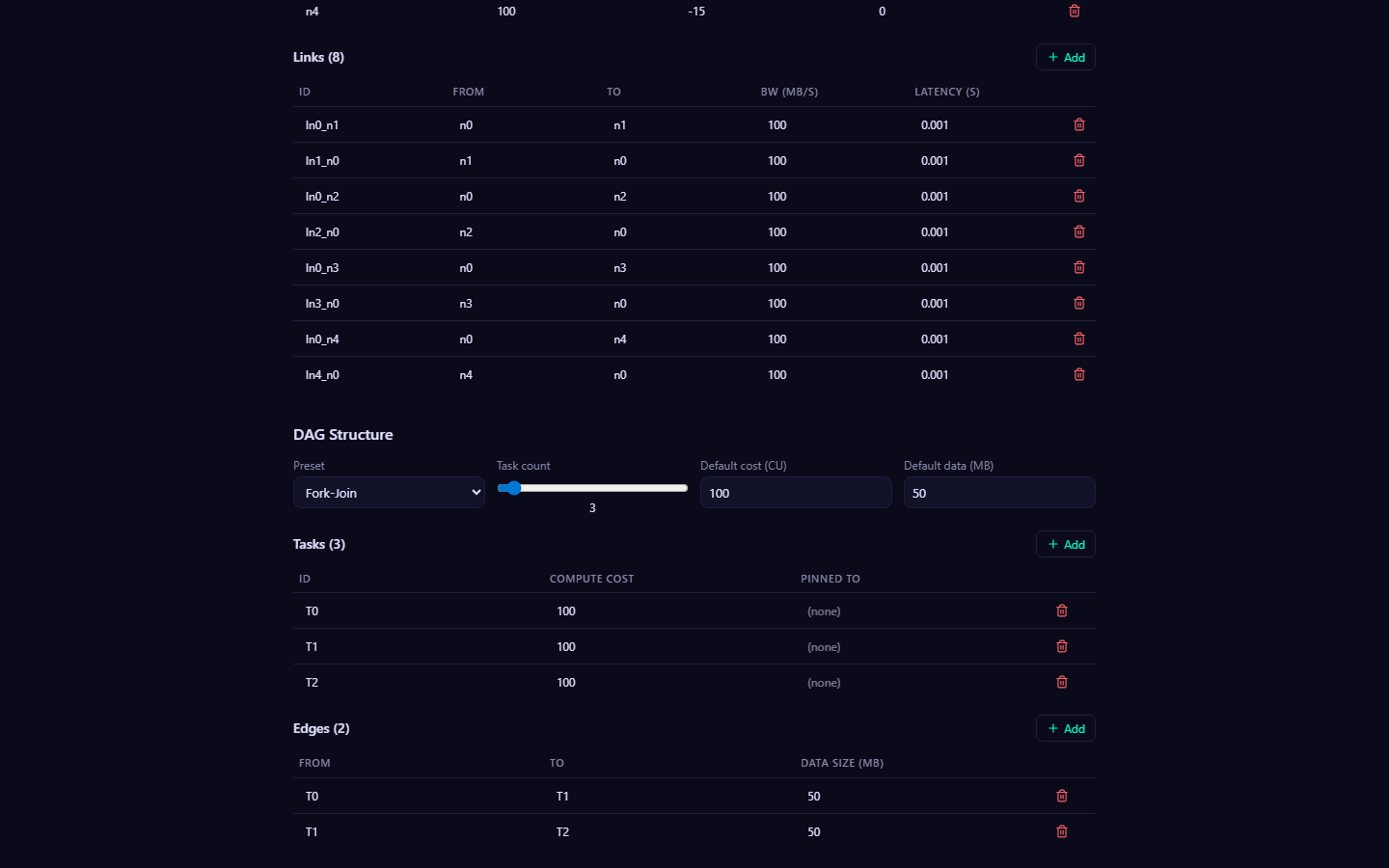

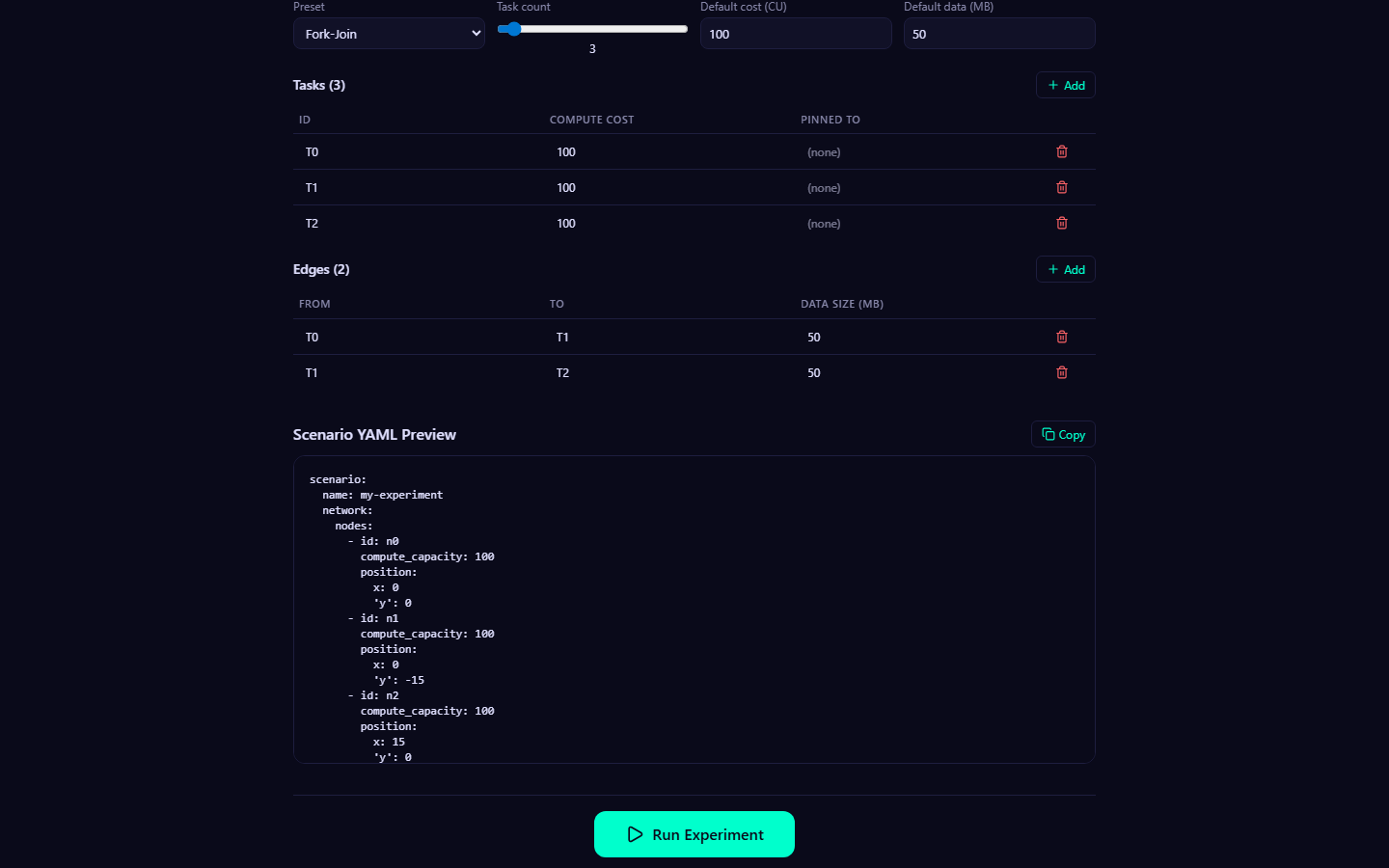

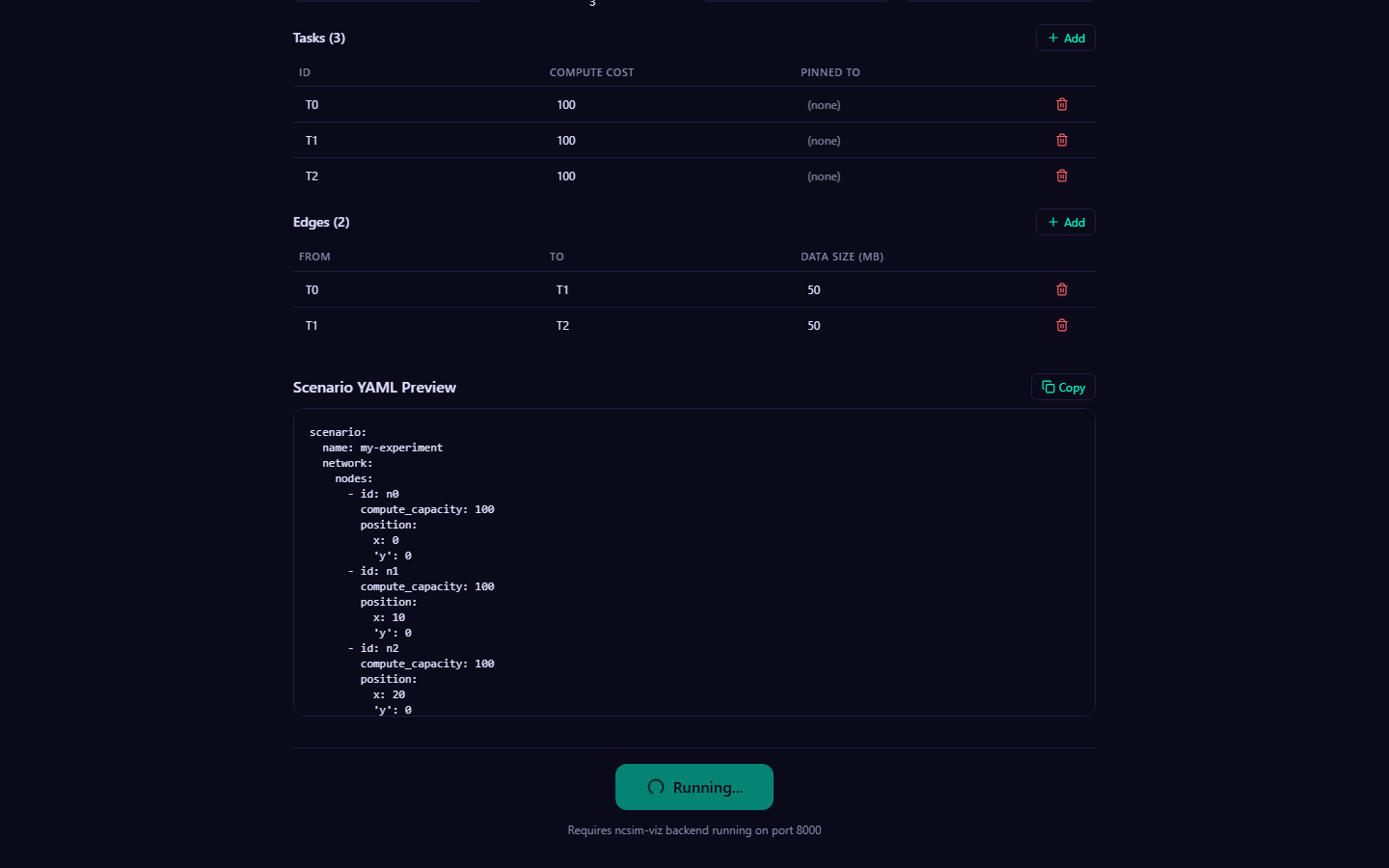

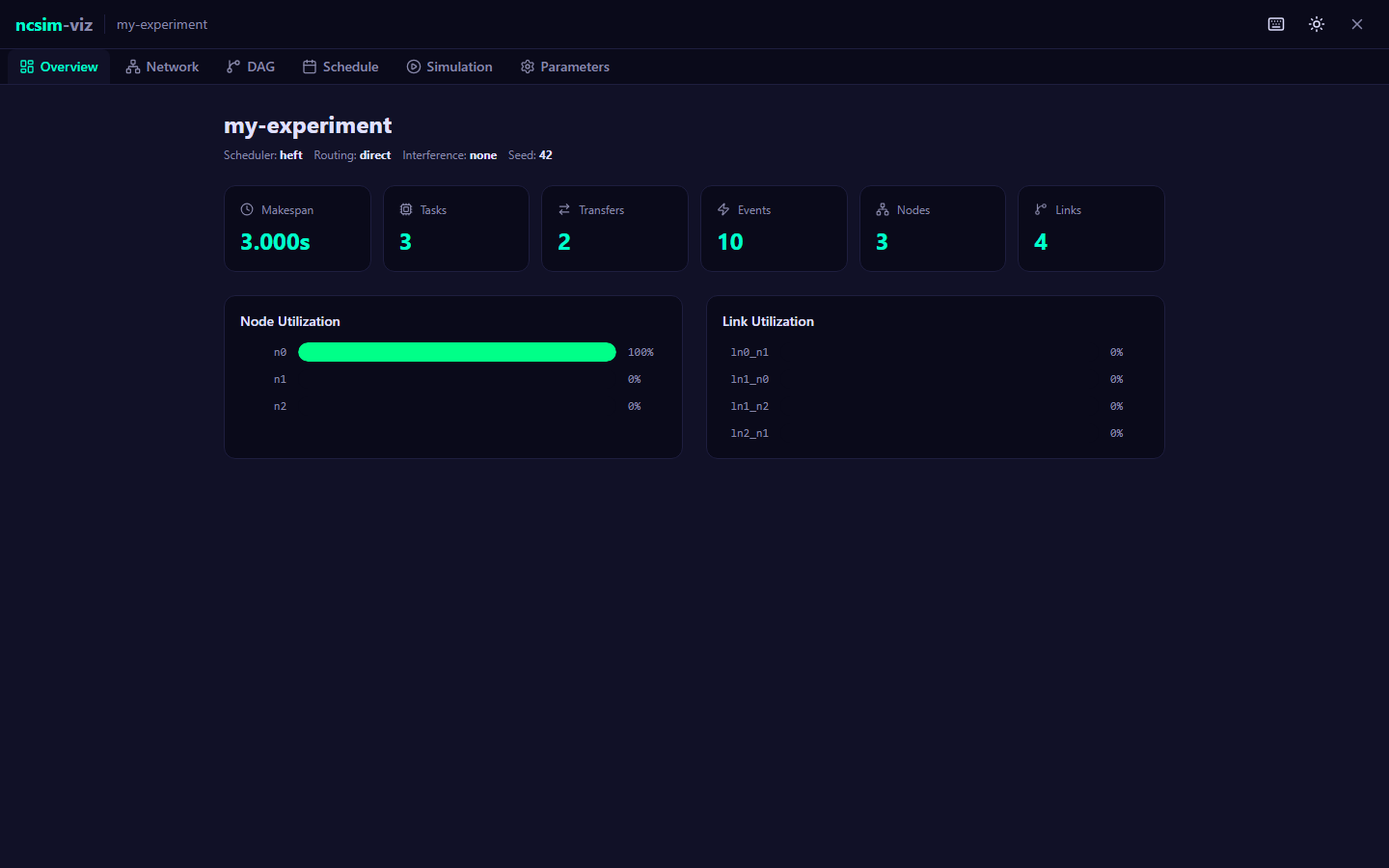

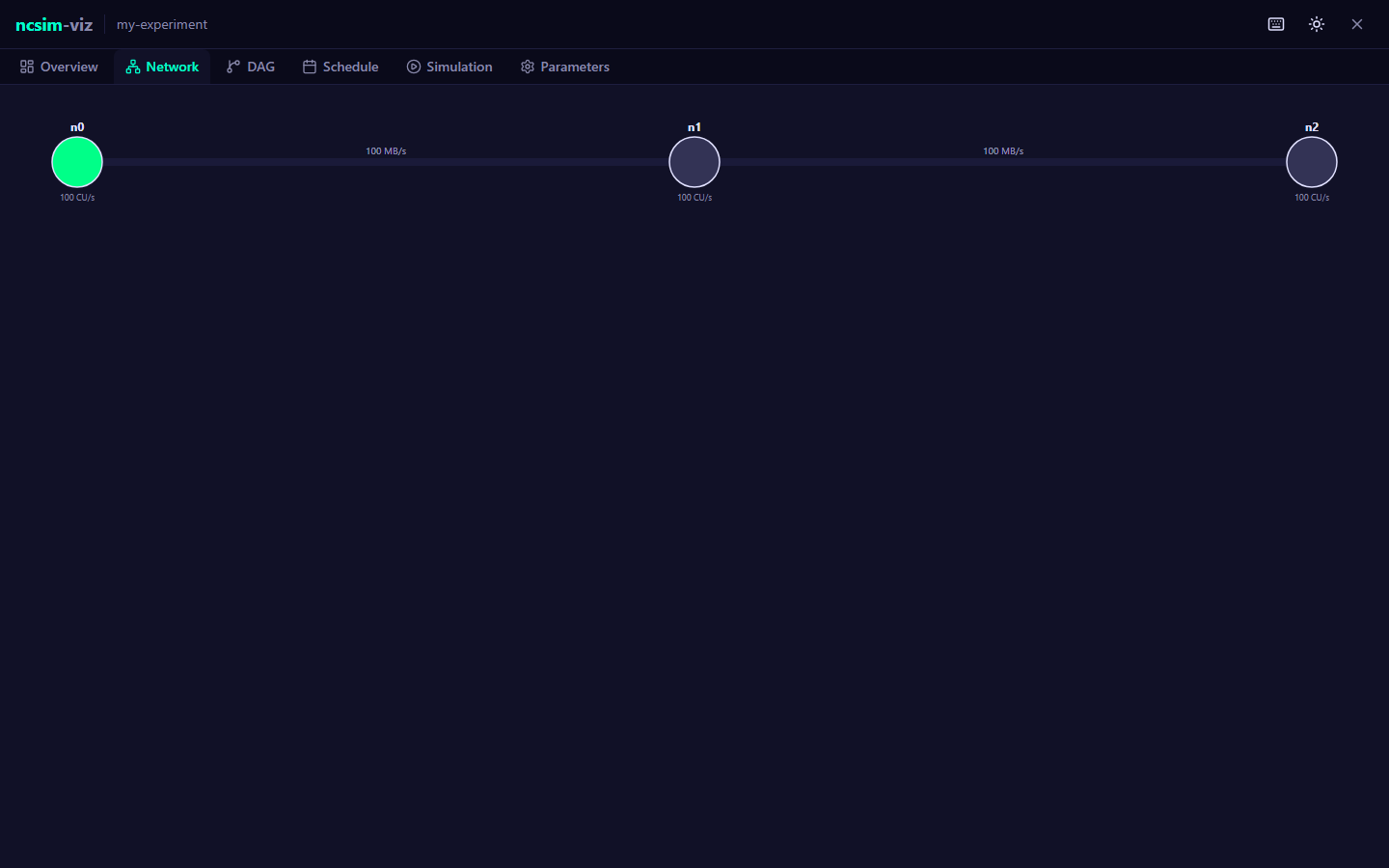

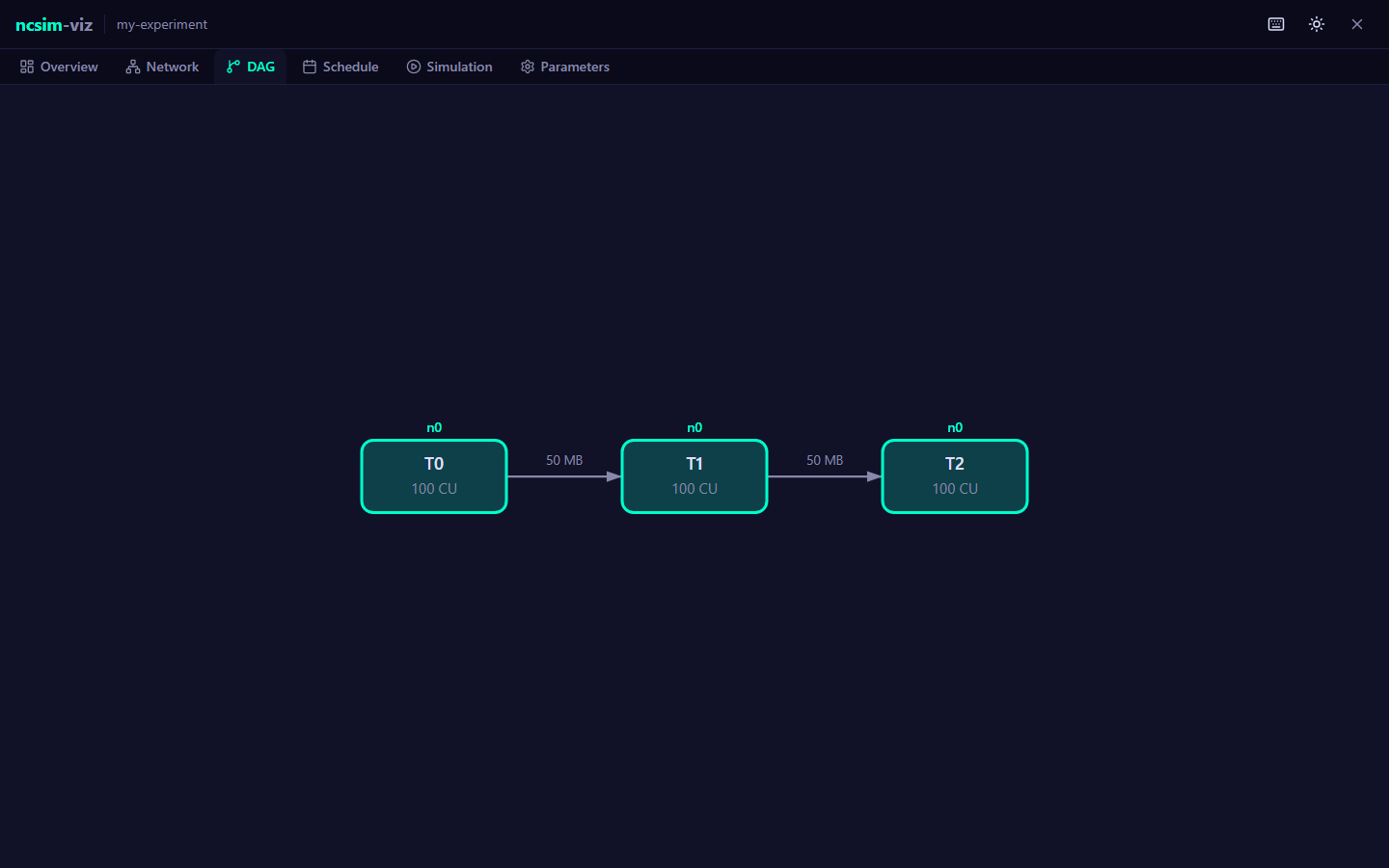

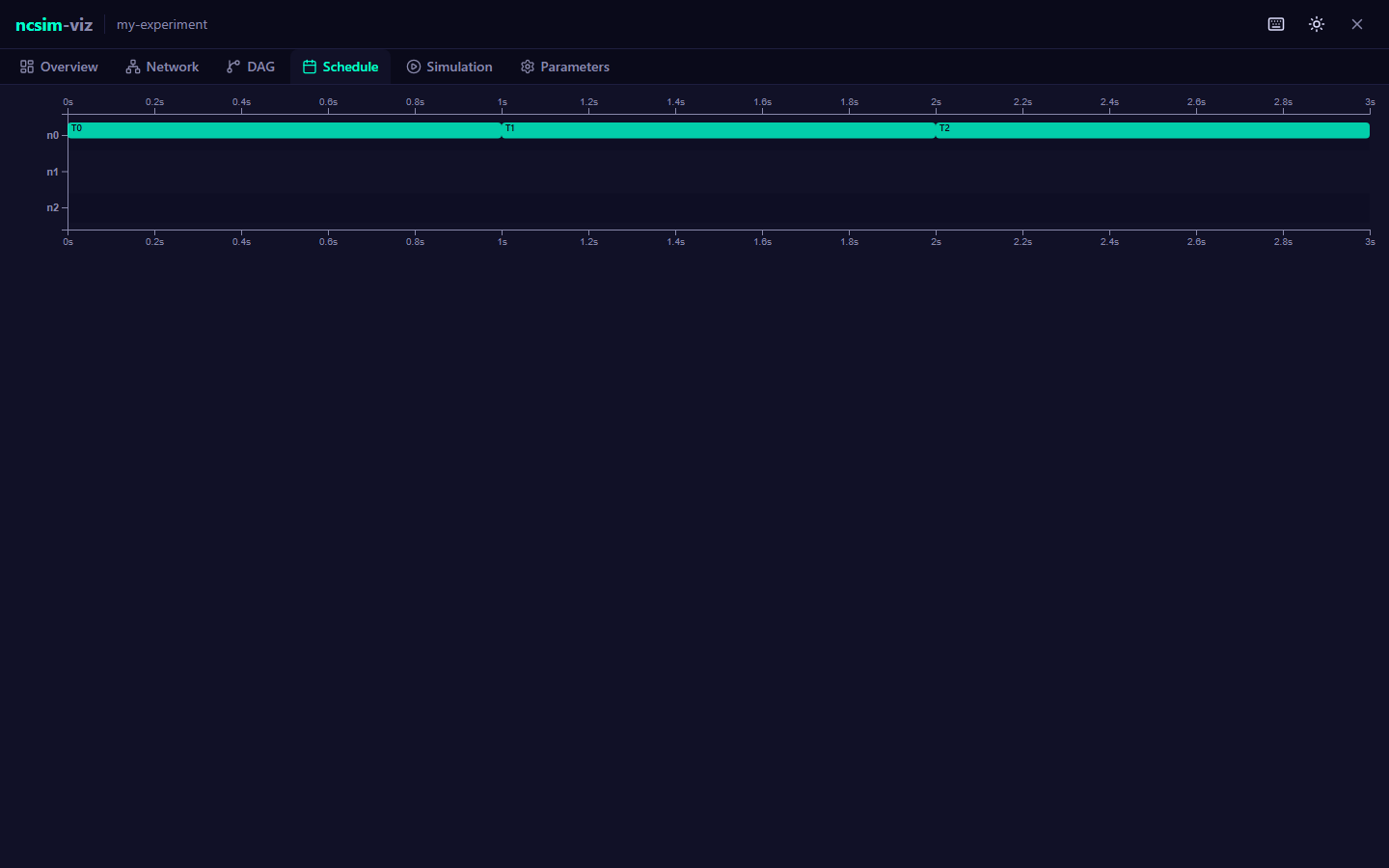

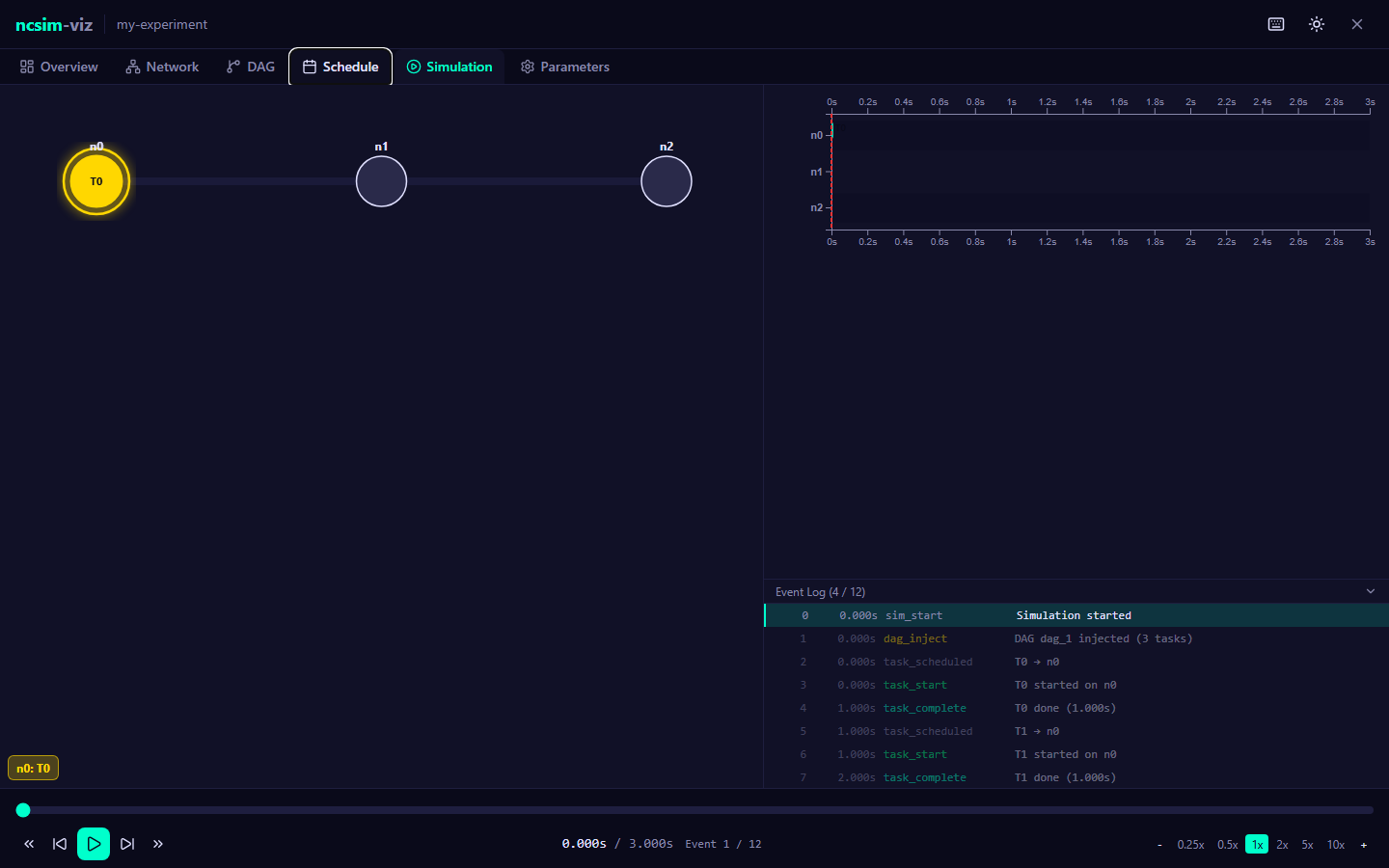

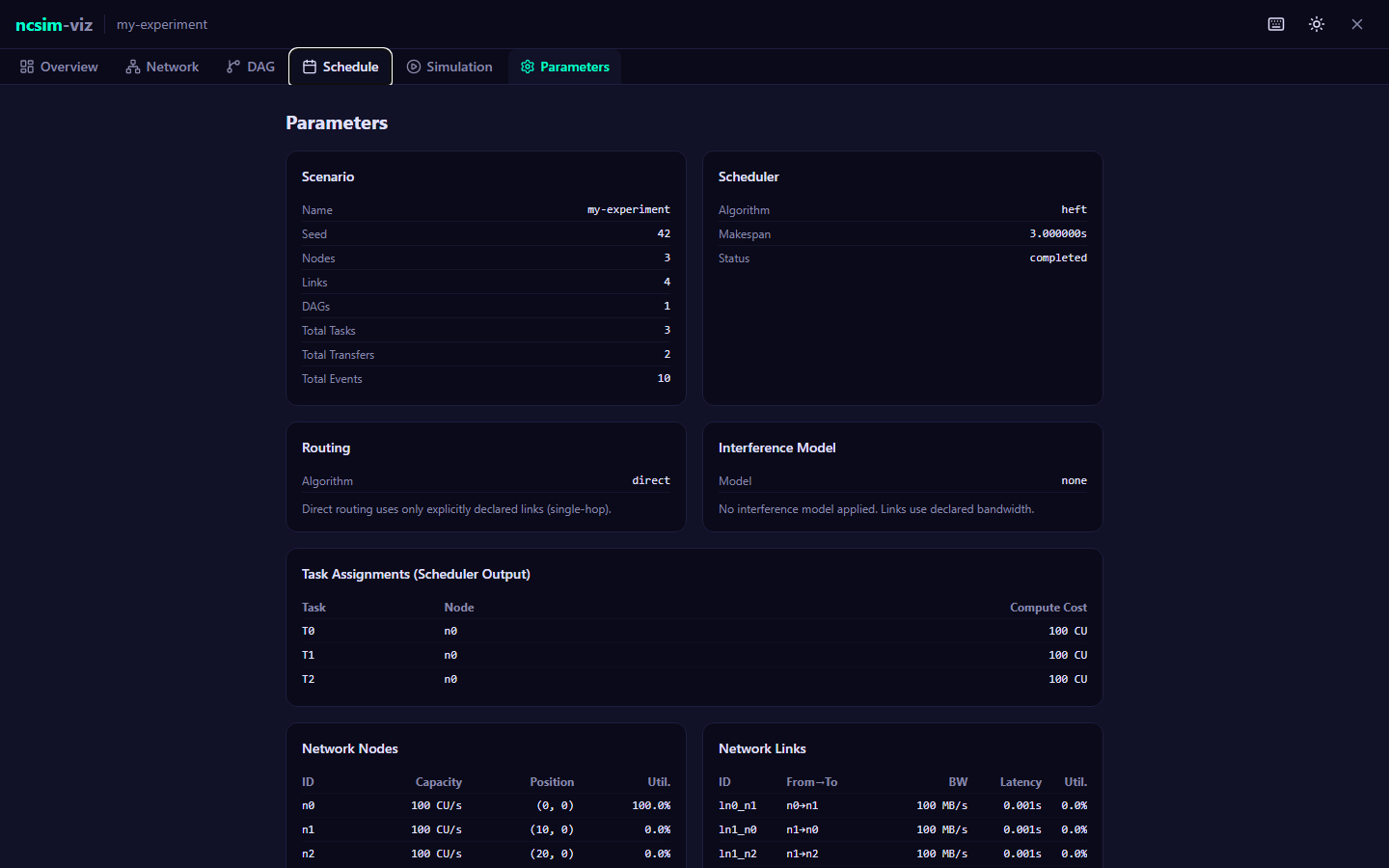

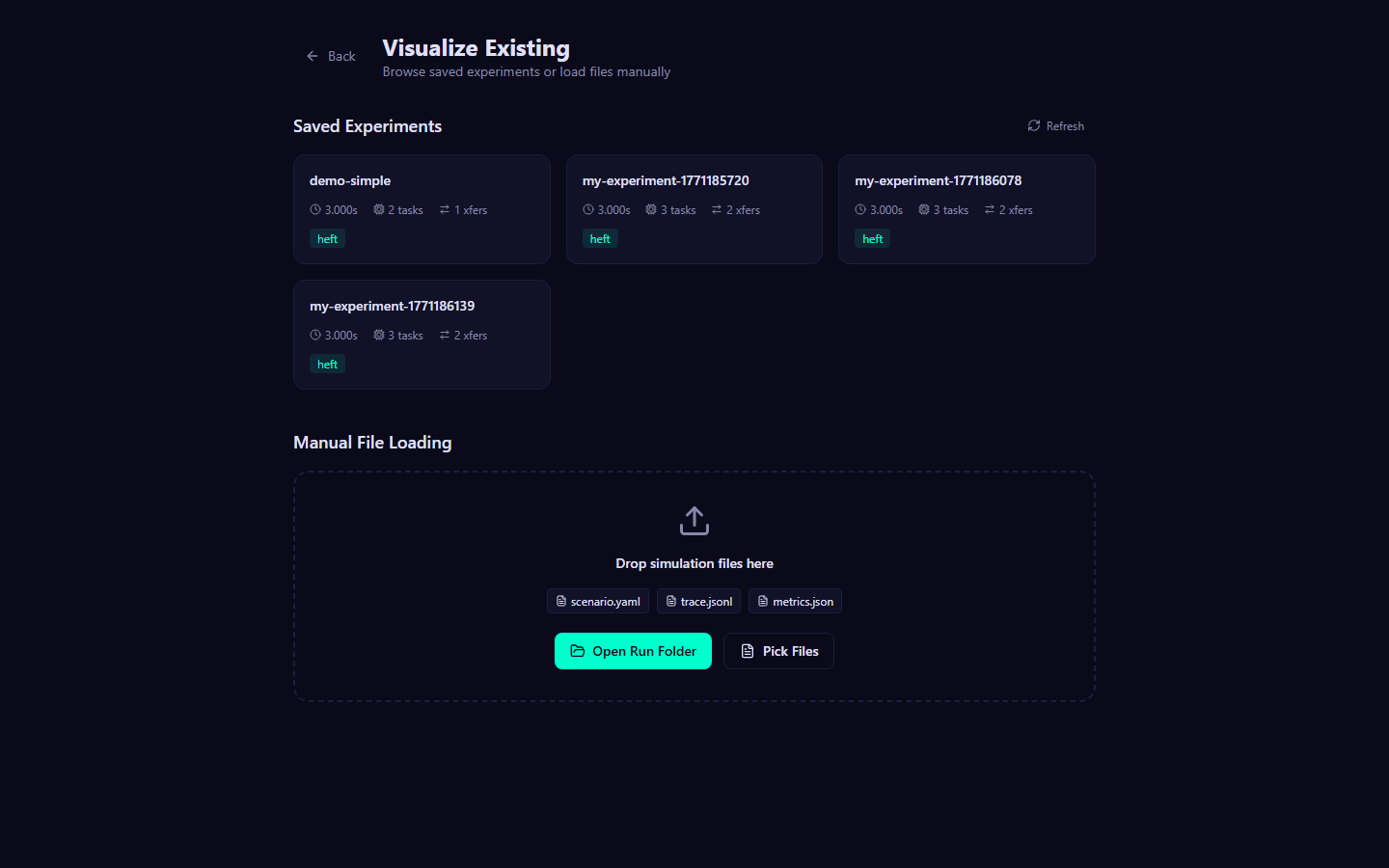

| 5 | Visualization | Set up and use the web UI to configure experiments and explore results interactively |

| 6 | Experiments | Reproduce interference verification and routing comparison experiments |

| 7 | Tutorials | Follow step-by-step walkthroughs from first simulation to advanced WiFi experiments |

| 8 | Reference | Look up FAQs, troubleshooting tips, and the glossary |

New to ncsim?

Start with the Installation guide, then follow the Quick Start to run your first simulation in under five minutes.

Quick Links¶

-

Installation

Set up Python, clone the repository, and install all dependencies.

-

Quick Start

Run your first simulation and examine the output in five minutes.

-

Scenario YAML Reference

Full specification of nodes, links, DAGs, tasks, and config options.

-

CLI Reference

All command-line flags, overrides, and usage examples.

-

Visualization

Interactive web UI for building scenarios and exploring results.

-

Tutorials

Guided walkthroughs from basic to advanced usage.

How It Works¶

At a high level, ncsim follows this pipeline:

graph LR

A[Scenario YAML] --> B[Scenario Loader]

B --> C[Scheduler<br/>HEFT / CPOP / RR]

C --> D[Simulation Engine]

D --> E[Trace JSONL]

D --> F[Metrics JSON]

F --> G[Viz UI / Analysis]

E --> G- You define a scenario in YAML: network topology, node capacities, link bandwidths, DAG task graphs, and configuration (scheduler, routing, interference model, seed).

- The scheduler (powered by anrg-saga) assigns tasks to nodes.

- The simulation engine executes the schedule as a discrete-event simulation, modeling compute time, data transfers, multi-hop routing, bandwidth sharing, and WiFi interference.

- The engine produces a JSONL trace (every event) and JSON metrics (summary statistics including makespan, utilization).

- You can analyze the output with the included

analyze_trace.pyscript, feed it into the web visualization UI, or process it with your own tools.

Project Information¶

| Repository | github.com/ANRGUSC/ncsim |

| PyPI package | anrg-ncsim |

| License | MIT |

| Python | 3.10+ |

| Contributors | Bhaskar Krishnamachari, Maya Gutierrez |

| Organization | Autonomous Networks Research Group (ANRG), University of Southern California |

Citation

If you use ncsim in your research, please cite it. See the CITATION.cff file in the repository for the recommended citation format.

Getting Started

Installation¶

This guide walks through installing ncsim, its dependencies, and the optional web visualization frontend.

Prerequisites¶

Verify that the following tools are installed and meet the minimum version requirements:

| Tool | Minimum Version | Check Command | Notes |

|---|---|---|---|

| Python | 3.10+ | python --version |

Required |

| pip | 21+ | pip --version |

Required |

| Git | 2.30+ | git --version |

Required for cloning the repo |

| Node.js | 18+ | node --version |

Viz frontend only |

| npm | 9+ | npm --version |

Viz frontend only |

Node.js is optional

Node.js and npm are only required if you plan to use the web visualization UI. The core simulator and CLI work with just Python.

Clone the Repository¶

The recommended way to get started is to clone the full repository. This gives you the example scenarios, experiment scripts, documentation, and web visualization UI:

Install ncsim¶

Editable Install (Recommended)¶

Install ncsim in editable (development) mode so that changes to the source code take effect immediately:

This installs the following dependencies automatically:

| Package | Version | Purpose |

|---|---|---|

| anrg-saga | >= 2.0.3 | HEFT, CPOP, and Round Robin scheduling algorithms |

| networkx | >= 3.0 | Graph data structures for routing and conflict graphs |

| pyyaml | >= 6.0 | YAML scenario file parsing |

To also install development dependencies (pytest, pytest-cov), use:

PyPI Install (Core Only)¶

Alternatively, install just the core simulator package from PyPI:

PyPI install does not include extras

The pip install anrg-ncsim command installs only the core simulator library and the ncsim CLI. It does not include the example scenarios, experiment scripts, visualization UI, or documentation. Use this option if you want to integrate ncsim as a library in your own project and will write your own scenario YAML files.

Verify the CLI¶

After installation, confirm that the ncsim command is available:

Expected output:

Install the Visualization Backend¶

The visualization backend is a FastAPI server that connects the web UI to ncsim. Install its dependencies from the viz/server/ directory:

This installs:

| Package | Version | Purpose |

|---|---|---|

| FastAPI | >= 0.115.0 | REST API framework |

| uvicorn | >= 0.34.0 | ASGI server |

Install the Visualization Frontend¶

The frontend is a React application built with Vite. Install its Node.js dependencies:

Stay in the project root

After running npm install, return to the project root directory so that subsequent ncsim commands work with the correct relative paths to scenario files.

Verify the Installation¶

Verify the CLI¶

Run the included demo_simple scenario to confirm the simulator works end to end:

You should see output ending with:

=== Simulation Complete ===

Scenario: Simple Demo

Scheduler: heft

Routing: direct

Interference: proximity

radius=15.0

Seed: 42

Makespan: 3.000000 seconds

Total events: 7

Status: completed

Confirm that three output files were created:

Verify the Visualization¶

Start the backend and frontend in two separate terminals:

Open http://localhost:5173 in your browser. You should see the ncsim-viz interface with a Configure & Run panel.

Run the Test Suite¶

To run the full test suite and confirm everything is functioning correctly:

Or equivalently:

The test suite includes unit tests for the event queue, execution engine, scheduling, routing, WiFi physics, interference models, and end-to-end acceptance tests.

Troubleshooting¶

1. ModuleNotFoundError: No module named 'ncsim'¶

Cause: ncsim is not installed in your current Python environment.

Fix: Run pip install -e . from the repository root. If you are using a virtual environment, make sure it is activated first.

# Activate your virtual environment (if using one)

source venv/bin/activate # Linux/macOS

venv\Scripts\activate # Windows

# Install ncsim

pip install -e .

2. ncsim: command not found¶

Cause: The pip scripts directory is not on your system PATH.

Fix: Either add pip's script directory to your PATH, or invoke ncsim as a Python module:

# Option A: Run as a module

python -m ncsim --scenario scenarios/demo_simple.yaml --output results/test

# Option B: Find and add the scripts directory

python -m site --user-base

# Add the bin/ (Linux/macOS) or Scripts/ (Windows) subdirectory to your PATH

3. Frontend shows "Network Error" when running an experiment¶

Cause: The FastAPI backend server is not running, or it is running on a different port than expected.

Fix: Start the backend in a separate terminal:

Confirm it is listening on http://localhost:8000 before using the frontend.

4. Port 8000 or 5173 is already in use¶

Cause: Another process is occupying the port.

Fix: Find and stop the conflicting process, or run on a different port:

5. npm install fails with errors¶

Cause: Node.js version is too old. The frontend requires Node.js 18 or later.

Fix: Update Node.js to version 18+ and try again:

node --version # Check current version

# Update using your package manager, nvm, or download from https://nodejs.org/

nvm install 18 # If using nvm

npm install # Retry

6. Visualization shows no experiments / empty experiment list¶

Cause: The viz/public/sample-runs/ directory is missing or contains no experiment results.

Fix: Run a simulation with output directed to the sample-runs directory, or copy an existing results folder there:

Then refresh the visualization in your browser.

7. ImportError: cannot import name ... from 'saga'¶

Cause: The anrg-saga package is not installed, or an incompatible version is installed.

Fix: Install or upgrade anrg-saga to version 2.0.3 or later:

If you have a different package named saga installed, it may conflict. Uninstall it first:

Next Steps¶

With ncsim installed, head to the Quick Start guide to run your first simulation in under five minutes.

Quick Start¶

Run your first ncsim simulation in five minutes. This guide assumes you have already completed the Installation steps.

Step 1: Run Your First Simulation¶

The repository includes several example scenarios in the scenarios/ directory. Start with the simplest one -- a two-node network with a two-task DAG:

You should see the following terminal output:

=== Simulation Complete ===

Scenario: Simple Demo

Scheduler: heft

Routing: direct

Interference: proximity

radius=15.0

Seed: 42

Makespan: 3.000000 seconds

Total events: 7

Status: completed

What just happened?

ncsim loaded the scenario, used the HEFT scheduler to assign two tasks to nodes, ran a discrete-event simulation, and produced output files with the full event trace and summary metrics. The makespan (3.0 seconds) is the total time from the start of the first task to the completion of the last task.

Step 2: Examine the Output Files¶

Every simulation run produces three files in the output directory:

| File | Format | Contents |

|---|---|---|

scenario.yaml |

YAML | Copy of the input scenario (for reproducibility) |

trace.jsonl |

JSONL | Every simulation event, one JSON object per line |

metrics.json |

JSON | Summary metrics: makespan, utilization, task/transfer counts |

Trace File (trace.jsonl)¶

The trace file records every event in chronological order. Each line is a self-contained JSON object with a sequence number, simulation time, and event type:

{"sim_time":0.0,"type":"sim_start","trace_version":"1.0","seed":42,"scenario":"demo_simple.yaml","seq":0}

{"sim_time":0.0,"type":"dag_inject","dag_id":"dag_1","task_ids":["T0","T1"],"seq":1}

{"sim_time":0.0,"type":"task_scheduled","dag_id":"dag_1","task_id":"T0","node_id":"n0","seq":2}

{"sim_time":0.0,"type":"task_start","dag_id":"dag_1","task_id":"T0","node_id":"n0","seq":3}

{"sim_time":1.0,"type":"task_complete","dag_id":"dag_1","task_id":"T0","node_id":"n0","duration":1.0,"seq":4}

{"sim_time":1.0,"type":"task_scheduled","dag_id":"dag_1","task_id":"T1","node_id":"n0","seq":5}

{"sim_time":1.0,"type":"task_start","dag_id":"dag_1","task_id":"T1","node_id":"n0","seq":6}

{"sim_time":3.0,"type":"task_complete","dag_id":"dag_1","task_id":"T1","node_id":"n0","duration":2.0,"seq":7}

{"sim_time":3.0,"type":"sim_end","status":"completed","makespan":3.0,"total_events":8,"seq":8}

The event types you will encounter are:

| Event Type | Description |

|---|---|

sim_start |

Simulation begins; records scenario name, seed, trace version |

dag_inject |

A DAG is injected into the simulation with its list of task IDs |

task_scheduled |

A task is assigned to a specific node by the scheduler |

task_start |

A task begins executing on its assigned node |

task_complete |

A task finishes executing; includes duration |

transfer_start |

A data transfer begins between tasks across a link |

transfer_complete |

A data transfer finishes; includes duration |

sim_end |

Simulation ends; records final status and makespan |

Metrics File (metrics.json)¶

The metrics file provides a high-level summary of the simulation run:

{

"scenario": "demo_simple.yaml",

"seed": 42,

"makespan": 3.0,

"total_tasks": 2,

"total_transfers": 1,

"total_events": 7,

"status": "completed",

"node_utilization": {

"n0": 1.0,

"n1": 0.0

},

"link_utilization": {

"l01": 0.0

}

}

Utilization

Node utilization is the fraction of the makespan during which a node is actively executing a task. Link utilization is the fraction of the makespan during which a link is carrying data. In this example, HEFT assigned both tasks to node n0, so n0 has 100% utilization, n1 has 0%, and link l01 was never used.

Step 3: Override Settings from the CLI¶

Scenario YAML files define default settings (scheduler, routing, seed), but you can override any of them from the command line. Try running the same scenario with a different scheduler, routing algorithm, and seed:

ncsim --scenario scenarios/demo_simple.yaml --output results/demo-cpop \

--scheduler cpop --routing widest_path --seed 123

=== Simulation Complete ===

Scenario: Simple Demo

Scheduler: cpop

Routing: widest_path

Interference: proximity

radius=15.0

Seed: 123

Makespan: 3.000000 seconds

Total events: 7

Status: completed

In this simple two-node case, both HEFT and CPOP produce the same makespan because the optimal strategy is to run both tasks on the faster node. The differences become significant on larger topologies.

The full set of CLI overrides:

| Flag | Values | Description |

|---|---|---|

--scheduler |

heft, cpop, round_robin, manual |

Scheduling algorithm |

--routing |

direct, widest_path, shortest_path |

Routing algorithm |

--interference |

none, proximity, csma_clique, csma_bianchi |

Interference model |

--interference-radius |

float | Radius for proximity interference (meters) |

--seed |

integer | Random seed for deterministic results |

--wifi-standard |

n, ac, ax |

WiFi standard for MCS rate tables |

--tx-power |

float (dBm) | WiFi transmit power |

--freq |

float (GHz) | WiFi carrier frequency |

--path-loss-exponent |

float | Path loss exponent |

--rts-cts |

flag | Enable RTS/CTS mechanism |

--verbose / -v |

flag | Enable debug-level logging |

Step 4: Try a More Complex Scenario¶

The parallel_spread.yaml scenario demonstrates the impact of routing on a multi-node topology. It defines 5 nodes in a line with 8 parallel tasks:

=== Simulation Complete ===

Scenario: Parallel Spread (Bidirectional)

Scheduler: heft

Routing: direct

Interference: proximity

radius=15.0

Seed: 42

Makespan: 35.348333 seconds

Total events: 51

Status: completed

Now run the same scenario with widest-path routing, which enables the scheduler to spread tasks across all 5 nodes via multi-hop paths:

=== Simulation Complete ===

Scenario: Parallel Spread (Bidirectional)

Scheduler: heft

Routing: widest_path

Interference: proximity

radius=15.0

Seed: 42

Makespan: 24.246722 seconds

Total events: 55

Status: completed

31% faster with widest-path routing

With direct routing, HEFT can only assign tasks to nodes that have a direct link to the task's data source, limiting it to 3 adjacent nodes. Widest-path routing enables multi-hop transfers, so HEFT can spread the 8 parallel tasks across all 5 nodes -- reducing the makespan from 35.3s to 24.2s.

Step 5: Analyze the Trace¶

The included analyze_trace.py script provides quick text-based analysis of trace files. Use the --timeline flag for a chronological event log and --gantt flag for an ASCII Gantt chart:

=== Event Timeline ===

[ 0.0000] sim_start scenario=demo_simple.yaml

[ 0.0000] dag_inject dag=dag_1, tasks=['T0', 'T1']

[ 0.0000] task_scheduled T0 on n0

[ 0.0000] task_start T0 on n0

[ 1.0000] task_complete T0 on n0 (duration=1.0)

[ 1.0000] task_scheduled T1 on n0

[ 1.0000] task_start T1 on n0

[ 3.0000] task_complete T1 on n0 (duration=2.0)

[ 3.0000] sim_end makespan=3.0

=== Execution Gantt Chart ===

Time: 0 3.00s

|============================================================|

n0 |#################### | T0 (1.000s)

n0 | ########################################| T1 (2.000s)

|============================================================|

Legend: # = task execution, ~ = data transfer

The analysis script supports three views:

| Flag | Description |

|---|---|

--timeline |

Chronological event log with timestamps |

--gantt |

ASCII Gantt chart showing task execution and data transfers |

--tasks |

Per-task detail: scheduled time, start time, completion time, duration, wait time |

You can combine flags, or run with no flags to get a default summary plus Gantt chart.

What's Next?¶

Now that you have run your first simulations, explore the rest of the documentation:

- Core Concepts: Architecture -- understand the simulation engine, event queue, and execution model

- Core Concepts: Scheduling -- learn how HEFT, CPOP, and Round Robin assign tasks to nodes

- Scenarios: YAML Reference -- full specification of the scenario file format for writing your own scenarios

- Scenarios: Scenario Gallery -- browse the 10 included example scenarios with descriptions and expected results

- Visualization: Overview -- set up the web UI and explore results interactively with Gantt charts, animated replay, and network topology views

- Tutorial 2: Custom Scenario -- build a scenario from scratch

- Tutorial 3: WiFi Experiment -- configure 802.11 interference models

- Tutorial 4: Compare Schedulers -- run batch experiments comparing scheduling algorithms

Core Concepts

Architecture¶

ncsim is a headless discrete event simulator for networked computing, designed around pluggable abstractions for scheduling, routing, and interference modeling. This page describes the package structure, data flow, key abstractions, and the optional visualization frontend.

Package Structure¶

ncsim/

├── main.py # CLI entry point (argparse, orchestration)

├── core/

│ ├── simulation.py # Main simulation loop (Simulation, SimulationResult)

│ ├── event_queue.py # Priority queue with deterministic ordering

│ ├── execution_engine.py # Event handlers, node/link state management

│ └── telemetry.py # Pluggable telemetry collectors

├── models/

│ ├── network.py # Node, Link, Position, Network dataclasses

│ ├── task.py # Task, TaskState, TaskStatus, FIFOQueueModel

│ ├── dag.py # DAG, Edge, DAGSource ABC

│ ├── routing.py # RoutingModel ABC + 3 implementations

│ ├── interference.py # InterferenceModel ABC + 4 implementations

│ └── wifi.py # 802.11 RF physics (PHY rates, conflict graph, Bianchi)

├── scheduler/

│ ├── base.py # Scheduler ABC, PlacementPlan, RoundRobinScheduler

│ └── saga_adapter.py # HEFT/CPOP via anrg-saga library

└── io/

├── scenario_loader.py # YAML parsing -> Scenario object

├── trace_writer.py # JSONL trace output (event stream)

└── results_writer.py # metrics.json output (summary)

Architecture Overview¶

The high-level data flow follows a linear pipeline from YAML input through simulation to structured output files.

flowchart LR

YAML["Scenario YAML"] --> SL["ScenarioLoader"]

SL --> SIM["Simulation"]

SIM --> TW["TraceWriter"]

SIM --> RW["ResultsWriter"]

TW --> TRACE["trace.jsonl"]

RW --> METRICS["metrics.json"]Simulation Pipeline¶

The simulation proceeds through seven distinct phases. Each phase transforms or consumes the output of the previous one.

flowchart TD

A["1. Load<br/>ScenarioLoader reads YAML<br/>-> Scenario with Network, DAGs, Config"] --> B

B["2. Configure<br/>CLI overrides applied<br/>(--scheduler, --routing, --interference, --seed)"] --> C

C["3. Wire<br/>Simulation constructed with<br/>Scheduler, DAGSource,<br/>RoutingModel, InterferenceModel"] --> D

D["4. Inject<br/>DAGs injected at inject_at times<br/>Scheduler returns PlacementPlan<br/>for each DAG"] --> E

E["5. Execute<br/>Event loop: pop from priority queue<br/>ExecutionEngine handles each event<br/>New events scheduled as side effects"] --> F

F["6. Trace<br/>Events forwarded to TraceWriter<br/>-> trace.jsonl (one JSON object per line)"] --> G

G["7. Results<br/>Makespan, utilization, status<br/>-> metrics.json"]Phase Details¶

1. Load. The ScenarioLoader reads a YAML file and produces a

Scenario object containing a Network (nodes + links), a list of

DAG objects (tasks + edges), and a ScenarioConfig with defaults for

scheduler, routing, interference, and seed.

2. Configure. CLI arguments such as --scheduler heft,

--routing widest_path, or --interference csma_bianchi override the

values from the YAML config section. The --seed flag overrides the

scenario seed for reproducibility experiments.

3. Wire. The Simulation object is constructed, which internally

creates an EventQueue and an ExecutionEngine. The engine receives

handles to the Network, Scheduler, RoutingModel, and optionally an

InterferenceModel.

4. Inject. The DAGSource (either SingleDAGSource or

MultiDAGSource) provides DAGs at their specified inject_at times. For

each DAG, a DAG_INJECT event is placed on the queue. When that event is

processed, the scheduler's on_dag_inject method is called, returning a

PlacementPlan that maps every task to a node.

5. Execute. The main loop pops events from the priority queue one at a

time. Each event is dispatched to the appropriate handler in the

ExecutionEngine, which may schedule new events as side effects. The loop

continues until the queue is empty.

6. Trace. A TraceEventAdapter listens to every processed event and

writes structured records to a JSONL file via TraceWriter. Each record

includes a sequence number, simulation time, event type, and

event-specific fields.

7. Results. After the loop completes, the ResultsWriter computes

makespan, per-node utilization, per-link utilization, and simulation

status, then writes everything to metrics.json.

Key Abstractions¶

ncsim uses abstract base classes (ABCs) at every extension point. Swapping behavior requires only implementing the ABC and selecting it via CLI flag or YAML config.

| Abstraction | Interface | Implementations | Configured by |

|---|---|---|---|

| Scheduler | on_dag_inject(dag, snapshot) -> PlacementPlan |

RoundRobinScheduler, ManualScheduler, SagaScheduler (HEFT, CPOP) |

--scheduler |

| RoutingModel | get_path(src, dst, network) -> [link_ids] |

DirectLinkRouting, WidestPathRouting, ShortestPathRouting |

--routing |

| InterferenceModel | get_interference_factor(link, actives, net) -> float |

NoInterference, ProximityInterference, CsmaCliqueInterference, CsmaBianchiInterference |

--interference |

| DAGSource | get_next_injection(after_time) -> (time, dag) |

SingleDAGSource, MultiDAGSource |

Scenario YAML |

| TelemetryCollector | on_event(event, engine) |

TraceOnlyCollector, FullStateCollector |

Internal |

| QueueModel | enqueue(task), dequeue() -> task |

FIFOQueueModel |

Internal |

Scheduler¶

The scheduler decides where tasks run. It receives a DAG and a

NetworkSnapshot (read-only view of nodes and links with capacities and

bandwidths), and returns a PlacementPlan mapping every task ID to a node

ID. The execution engine decides when tasks run based on event

ordering and node availability.

Pinned tasks

Any task with a pinned_to field in the YAML overrides the

scheduler's assignment. This works with all schedulers, including

HEFT and CPOP.

RoutingModel¶

The routing model determines the path (sequence of link IDs) for

data transfers between nodes. DirectLinkRouting requires an explicit

link and fails if none exists. WidestPathRouting finds the path that

maximizes bottleneck bandwidth using modified Dijkstra. ShortestPathRouting

minimizes total latency using standard Dijkstra.

InterferenceModel¶

The interference model computes a multiplicative factor in (0, 1] applied

to a link's base bandwidth when other links are simultaneously active.

This is orthogonal to per-link fair sharing: if a link has base bandwidth

B, interference factor f, and N concurrent transfers, each transfer gets

(B * f) / N.

DAGSource¶

A DAGSource provides DAGs for injection into the simulation at specified

times. SingleDAGSource injects one DAG. MultiDAGSource injects

multiple DAGs sorted by their inject_at times.

Extensibility¶

Adding new models

To add a new scheduling algorithm, routing model, or interference model, implement the corresponding ABC and register it in the CLI argument choices and factory function.

The ABC-based architecture supports the following future extensions without modifying the core simulation loop:

- RL-based scheduling -- Implement

Scheduler.on_dag_injectwith a trained policy network. - Preemptive tasks -- Extend

QueueModelwith priority-based preemption; theTaskStatealready trackscompute_remaining. - TDMA links -- Implement a

LinkModelthat returns time-varying bandwidth based on slot schedules. TheEventTypeenum already reservesTDMA_SLOT_START. - Mobility -- Schedule

MOBILITY_UPDATEevents that recompute positions and update link bandwidths. The event type is already reserved. - Jamming / disruptions -- Schedule

LINK_STATE_CHANGEevents that degrade or disable links mid-simulation.

Visualization Architecture¶

ncsim includes an optional web-based visualization frontend (viz/

directory) for interactive trace playback and scenario editing.

Stack¶

| Layer | Technology |

|---|---|

| Frontend | React 19, TypeScript, Vite |

| Layout & graphics | D3.js (network graph), Dagre (DAG layout) |

| Styling | Tailwind CSS 4 |

| Backend | FastAPI + uvicorn (Python) |

| Simulation | ncsim invoked as subprocess |

Communication¶

The frontend development server (Vite, port 5173) proxies all /api/*

requests to the FastAPI backend running on port 8000. The backend

accepts scenario YAML, runs ncsim as a subprocess, and returns the

parsed trace and metrics to the browser.

sequenceDiagram

participant Browser

participant FastAPI

participant ncsim

Browser->>FastAPI: POST /api/run {yaml}

FastAPI->>ncsim: subprocess.run(["ncsim", ...])

ncsim-->>FastAPI: trace.jsonl, metrics.json

FastAPI-->>Browser: {scenario, trace, metrics}The browser receives the full simulation output in a single response and renders an interactive timeline with network topology, DAG structure, and event-by-event playback.

Simulation Model¶

ncsim uses a discrete event simulation (DES) to model task execution and data transfer across a networked computing environment. This page covers the event model, time management, compute and transfer calculations, and bandwidth sharing mechanics.

Discrete Event Simulation Basics¶

A discrete event simulation advances time by jumping from one event to the next, rather than stepping through fixed time increments. The core loop is:

- Pop the highest-priority event from the queue.

- Advance the simulation clock to that event's time.

- Execute the event handler, which may schedule new events.

- Repeat until the queue is empty.

No fixed time step

Unlike tick-based simulations, DES skips idle periods entirely. A simulation with two events at t=0.0 and t=100.0 processes only those two events, regardless of the 100-second gap between them.

This approach gives ncsim exact timing for task completions and data transfers without discretization error.

Event Types¶

ncsim defines six core event types, each with a fixed priority value. Lower priority values are processed first when multiple events occur at the same simulation time.

| Event Type | Priority | Description |

|---|---|---|

DAG_INJECT |

0 | A new DAG arrives and is handed to the scheduler |

TASK_COMPLETE |

1 | A task finishes execution on its assigned node |

TRANSFER_COMPLETE |

2 | A data transfer finishes on its link path |

TASK_READY |

3 | All predecessors of a task are satisfied |

TASK_START |

4 | A task begins execution on a node |

TRANSFER_START |

5 | A data transfer begins on a link |

Why completions come before starts

At the same simulation time, completions must be processed before starts. When a task completes, it frees its node and triggers output transfers. Those transfers may complete instantly (same-node), making a downstream task ready. If starts were processed first, the downstream task could miss being scheduled at the correct time.

Four additional event types are reserved for future extensions:

MOBILITY_UPDATE (100), LINK_STATE_CHANGE (101),

RESCHEDULE_TRIGGER (102), and TDMA_SLOT_START (103). Their handlers

currently no-op.

Event Ordering¶

Events are stored in a min-heap (priority queue) and ordered by a three-element sort key:

| Component | Purpose |

|---|---|

round(sim_time, 6) |

Microsecond precision avoids floating-point comparison issues |

event_type.priority |

Ensures correct causal ordering at the same time instant |

event_id |

Monotonically increasing counter guarantees FIFO order for ties |

This three-level ordering guarantees determinism: given the same inputs and the same seed, the simulation produces an identical event sequence every time.

Event Cancellation¶

The queue supports lazy cancellation. When a transfer's completion time is recalculated (due to bandwidth sharing changes), the old completion event is added to a cancelled set. When the queue pops a cancelled event, it silently discards it and pops the next one. This avoids the cost of heap removal while maintaining correctness.

Determinism¶

Reproducibility guarantee

Same scenario YAML + same --seed = identical event sequence,

identical makespan, identical trace output.

Determinism comes from three sources:

- Microsecond rounding -- All times are rounded to 6 decimal places

via

round_time(), eliminating platform-dependent floating-point drift. - Priority-based ordering -- Event type priorities impose a fixed processing order at each time instant.

- Monotonic event IDs -- A global counter breaks all remaining ties in insertion order.

Compute Model¶

Each node has a compute_capacity measured in compute units per second.

Each task has a compute_cost measured in compute units. The execution

time is:

For example, a task with compute_cost: 200 on a node with

compute_capacity: 100 takes 2.0 seconds.

Node Queuing¶

Each node runs at most one task at a time (single-server model). When a task becomes ready and its assigned node is busy, the task enters a FIFO queue on that node. Tasks are dequeued and started in arrival order when the node becomes idle.

No preemption

A running task always completes before any queued task starts. The

TaskState dataclass tracks compute_remaining to support future

preemptive scheduling, but the current engine does not interrupt

running tasks.

Task Lifecycle¶

A task moves through these statuses:

| Status | Meaning |

|---|---|

PENDING |

Waiting for predecessor tasks to complete |

READY |

All predecessors complete; waiting for node availability |

QUEUED |

In the node's FIFO queue (node is busy) |

RUNNING |

Executing on the node |

COMPLETED |

Finished execution |

Transfer Model¶

When a task completes, the engine schedules data transfers for each outgoing edge in the DAG. Transfers move data from the source task's node to the destination task's node over a network path.

Local Transfers¶

If the source and destination tasks are assigned to the same node, no network transfer occurs. The predecessor is marked complete immediately at zero cost.

Network Transfers¶

For tasks on different nodes, the routing model determines a path (sequence of link IDs). The transfer time is:

Where:

data_sizeis the edge'sdata_sizein MB.effective_bandwidthis the bottleneck bandwidth across all links in the path, after accounting for per-link fair sharing and interference.total_latencyis the sum of all link latencies along the path (store-and-forward model).

Multi-Hop Paths¶

For paths with more than one link:

- Bottleneck bandwidth = minimum bandwidth across all links in the path.

- Total latency = sum of latencies across all links (each hop adds its propagation delay).

The store-and-forward model means data must fully arrive at each intermediate node before being forwarded to the next hop.

Bandwidth Sharing¶

When multiple transfers use the same link simultaneously, they share the

link's bandwidth equally. If N transfers share a link with base bandwidth

B (after interference), each gets B / N.

Dynamic recalculation

When a transfer starts or completes on a link, the engine recalculates the completion times of all other active transfers on that link. Old completion events are cancelled and replaced with new ones reflecting the updated bandwidth allocation.

The recalculation accounts for partial progress. When bandwidth changes mid-transfer:

- The engine computes how much data was transferred at the previous rate during the elapsed time.

- It subtracts that from

data_remaining. - It schedules a new completion event based on the remaining data and the new effective rate.

For multi-hop transfers, the effective bandwidth is the minimum across all links in the path, each independently sharing its bandwidth among its concurrent transfers.

Interference Effects on Bandwidth¶

When an InterferenceModel is active, bandwidth sharing becomes a

two-level calculation:

- Interference factor: The model returns a factor f in (0, 1] for each link based on other active links in the network (e.g., nearby wireless transmitters).

- Per-link fair sharing: N concurrent transfers on the link each get

(B * f) / N.

Interference factors are recomputed whenever any transfer starts or completes, and affected transfers have their completion times recalculated.

Example: Two-Task Execution¶

The following diagram shows the event sequence for a simple scenario with two tasks (T0 and T1) where T1 depends on T0 and they are assigned to different nodes.

sequenceDiagram

participant EQ as Event Queue

participant Engine as ExecutionEngine

Note over EQ,Engine: t=0.000000

EQ->>Engine: DAG_INJECT (dag_1)

Note right of Engine: Scheduler assigns T0->n0, T1->n1<br/>Schedules TASK_READY for root task T0

EQ->>Engine: TASK_READY (T0)

Note right of Engine: Node n0 is idle<br/>Schedules TASK_START for T0

EQ->>Engine: TASK_START (T0, n0)

Note right of Engine: runtime = 100 / 100 = 1.0s<br/>Schedules TASK_COMPLETE at t=1.0

Note over EQ,Engine: t=1.000000

EQ->>Engine: TASK_COMPLETE (T0, n0)

Note right of Engine: T0 done. Edge T0->T1 has data_size=50 MB<br/>T0 on n0, T1 on n1: network transfer<br/>Schedules TRANSFER_START

EQ->>Engine: TRANSFER_START (T0->T1, link l01)

Note right of Engine: effective_bw = 100 MB/s, latency = 0.001s<br/>transfer_time = 50/100 + 0.001 = 0.501s<br/>Schedules TRANSFER_COMPLETE at t=1.501

Note over EQ,Engine: t=1.501000

EQ->>Engine: TRANSFER_COMPLETE (T0->T1)

Note right of Engine: T1's last predecessor satisfied<br/>Schedules TASK_READY for T1

EQ->>Engine: TASK_READY (T1)

Note right of Engine: Node n1 is idle<br/>Schedules TASK_START for T1

EQ->>Engine: TASK_START (T1, n1)

Note right of Engine: runtime = 200 / 50 = 4.0s<br/>Schedules TASK_COMPLETE at t=5.501

Note over EQ,Engine: t=5.501000

EQ->>Engine: TASK_COMPLETE (T1, n1)

Note right of Engine: No successors. DAG complete.<br/>Makespan = 5.501000sTracing this locally

Run this exact scenario with:

The verbose flag (-v) logs every event to the console. The

output directory will contain trace.jsonl and metrics.json.

Output Files¶

trace.jsonl¶

One JSON object per line, in event order. Every record has:

| Field | Type | Description |

|---|---|---|

seq |

int | Monotonically increasing sequence number |

sim_time |

float | Simulation time in seconds (6 decimal places) |

type |

string | Event type (dag_inject, task_start, task_complete, transfer_start, transfer_complete, sim_start, sim_end) |

Additional fields vary by event type (e.g., dag_id, task_id,

node_id, link_id, duration, data_size).

metrics.json¶

A single JSON object with summary metrics:

| Field | Type | Description |

|---|---|---|

scenario |

string | Scenario file name |

seed |

int | Random seed used |

makespan |

float | Time of last task completion |

total_tasks |

int | Number of tasks across all DAGs |

total_transfers |

int | Number of data transfer edges |

total_events |

int | Total events processed |

status |

string | "completed" or "error" |

node_utilization |

object | Per-node busy_time / makespan (0.0 to 1.0) |

link_utilization |

object | Per-link data_transferred / (bandwidth * makespan) |

Scheduling Algorithms¶

ncsim supports three scheduling algorithms that decide where each

task runs. The scheduler receives a DAG and a snapshot of the network

(node capacities, link bandwidths), and returns a PlacementPlan

mapping every task to a node. The execution engine then decides

when tasks run based on event ordering and node availability.

Select a scheduler via CLI:

Or in the scenario YAML:

HEFT (Heterogeneous Earliest Finish Time)¶

HEFT is a list-scheduling heuristic designed for heterogeneous computing environments. It is the default scheduler in ncsim and generally produces the best makespans.

Algorithm¶

-

Compute upward rank for each task. The upward rank is the longest path (by computation + communication cost) from the task to any exit task in the DAG. Tasks with higher upward rank are scheduled first.

-

Sort tasks by decreasing upward rank. This ordering ensures that tasks on the critical path are considered before less important tasks.

-

For each task (in rank order), evaluate every node and select the one that gives the earliest finish time (EFT). The finish time accounts for:

- The task's execution time on that node (

compute_cost / compute_capacity). - The data transfer time for all incoming edges from predecessor tasks already placed on other nodes.

- The node's availability (when it becomes idle after finishing its currently assigned workload).

- The task's execution time on that node (

Communication awareness

HEFT accounts for the cost of data transfers between nodes. If two communicating tasks are placed on the same node, the transfer cost is zero. This means HEFT will naturally co-locate tightly coupled tasks when the communication cost outweighs the benefit of faster remote execution.

When to Use HEFT¶

- General-purpose default for heterogeneous networks.

- Networks where nodes have different compute capacities.

- DAGs with mixed computation and communication requirements.

CPOP (Critical Path on a Processor)¶

CPOP is a variant of list scheduling that identifies the DAG's critical path and concentrates those tasks on the single fastest processor.

Algorithm¶

-

Compute upward rank and downward rank for each task. The downward rank is the longest path from the entry task to the current task.

-

Compute priority as the sum of upward and downward rank. Tasks on the critical path all share the same maximum priority value.

-

Identify critical-path tasks -- tasks whose priority equals the maximum.

-

Assign critical-path tasks to the single processor (node) that minimizes the total critical-path execution time.

-

Assign non-critical tasks using the EFT heuristic (same as HEFT).

When to Use CPOP¶

- DAGs with a dominant critical path (one long chain of dependent tasks).

- Networks with one node significantly faster than the others, where running the critical path entirely on that node avoids inter-node transfer overhead.

CPOP can underperform HEFT

If the DAG has multiple paths of similar length, CPOP's strategy of concentrating on a single critical path may leave the fast processor overloaded while other nodes sit idle. In such cases, HEFT's per-task EFT approach tends to produce better makespans.

Round Robin¶

Round Robin assigns tasks to nodes in simple cyclic order. It is communication-unaware and heterogeneity-unaware.

Algorithm¶

- Order tasks topologically (predecessors before successors).

- Cycle through nodes: task 0 goes to node 0, task 1 to node 1, task 2 to node 0, and so on.

Baseline only

Round Robin ignores compute capacities, data dependencies, and transfer costs. It exists solely as a baseline for comparing against intelligent schedulers. Do not use it for performance- sensitive simulations.

Comparison¶

| Feature | HEFT | CPOP | Round Robin |

|---|---|---|---|

| Task ordering | Upward rank (descending) | Priority = upward + downward rank | Topological (insertion order) |

| Node selection | Earliest Finish Time (EFT) across all nodes | Critical-path tasks to fastest node; others to min EFT | Cyclic assignment |

| Communication-aware | Yes | Yes | No |

| Heterogeneity-aware | Yes | Yes | No |

| Best for | General case | Dominant critical path + one fast node | Baseline comparisons |

| Library | anrg-saga | anrg-saga | Built-in |

Manual Assignment (pinned_to)¶

Tasks can be pinned to specific nodes using the pinned_to field in the

scenario YAML. This bypasses the scheduler's decision for that task.

dags:

- id: dag_1

tasks:

- id: T0

compute_cost: 100

pinned_to: n0 # Force T0 onto node n0

- id: T1

compute_cost: 200

pinned_to: n1 # Force T1 onto node n1

- id: T2

compute_cost: 150 # No pin -- scheduler decides

edges:

- { from: T0, to: T2, data_size: 10 }

- { from: T1, to: T2, data_size: 20 }

Pinned tasks work with any scheduler:

- With

--scheduler manual, all tasks must havepinned_toset (tasks without it are assigned to the first node with a warning). - With

--scheduler heftor--scheduler cpop, pinned tasks override the scheduler's choice. Unpinned tasks are scheduled normally. - With

--scheduler round_robin, pinned tasks override the cyclic assignment.

Testing specific placements

Manual assignment is useful for validating simulation correctness against hand-calculated expected results, or for testing how a specific placement performs under different interference or routing models.

Example: Same DAG, Different Schedulers¶

Consider a diamond-shaped DAG with four tasks on a two-node network:

scenario:

name: "Diamond DAG"

network:

nodes:

- id: fast

compute_capacity: 100

- id: slow

compute_capacity: 50

links:

- id: link_fs

from: fast

to: slow

bandwidth: 10

latency: 0.001

- id: link_sf

from: slow

to: fast

bandwidth: 10

latency: 0.001

dags:

- id: diamond

tasks:

- { id: A, compute_cost: 100 } # Entry

- { id: B, compute_cost: 200 } # Left branch

- { id: C, compute_cost: 200 } # Right branch

- { id: D, compute_cost: 100 } # Exit (merge)

edges:

- { from: A, to: B, data_size: 5 }

- { from: A, to: C, data_size: 5 }

- { from: B, to: D, data_size: 5 }

- { from: C, to: D, data_size: 5 }

HEFT Placement¶

HEFT computes upward ranks and assigns each task to the node giving the earliest finish time:

| Task | Upward Rank | Assignment | Rationale |

|---|---|---|---|

| A | highest | fast | Fastest execution for the entry task |

| B | mid | fast | Co-locating with A avoids transfer cost |

| C | mid | slow | Runs in parallel with B on the other node |

| D | lowest | fast | Fastest node for the exit merge |

Because B and C can run in parallel on different nodes, HEFT overlaps their execution. The makespan is dominated by the critical path A -> B -> D (or A -> C -> D, whichever is longer after accounting for transfer times).

CPOP Placement¶

CPOP identifies the critical path (e.g., A -> B -> D) and assigns all

three to fast. Task C (non-critical) goes to slow via EFT.

| Task | On Critical Path | Assignment |

|---|---|---|

| A | Yes | fast |

| B | Yes | fast |

| C | No | slow |

| D | Yes | fast |

This avoids transfers along the critical path (A, B, D all on the same node), but C must transfer its output to D across the network.

Round Robin Placement¶

Round Robin simply cycles: A -> fast, B -> slow, C -> fast, D -> slow.

| Task | Assignment | Rationale |

|---|---|---|

| A | fast | First in cycle |

| B | slow | Second in cycle |

| C | fast | Third in cycle (wraps) |

| D | slow | Fourth in cycle |

This placement is communication-unaware. Every edge in the DAG requires a network transfer, and the slow node bottlenecks task execution. The resulting makespan is significantly worse than HEFT or CPOP.

Makespan Comparison¶

Running the three schedulers on the diamond scenario above produces different makespans. The exact values depend on bandwidth and latency parameters, but the relative ranking is consistent:

# Run with each scheduler

ncsim --scenario diamond.yaml --output out/heft --scheduler heft

ncsim --scenario diamond.yaml --output out/cpop --scheduler cpop

ncsim --scenario diamond.yaml --output out/rr --scheduler round_robin

| Scheduler | Typical Makespan Ranking |

|---|---|

| HEFT | Best (parallelism + communication-aware) |

| CPOP | Close to HEFT (critical path optimized) |

| Round Robin | Worst (no optimization) |

SAGA Library Integration¶

HEFT and CPOP are implemented via the

anrg-saga library. ncsim's

SagaScheduler adapter translates between ncsim's data model and SAGA's:

-

Network translation -- SAGA requires a fully-connected graph. The adapter creates edges for all node pairs:

LOCAL_SPEED(10000 MB/s) for same-node, actual link bandwidth for connected pairs, widest-path bandwidth for multi-hop pairs (when--routing widest_pathor--routing shortest_pathis active), andDISCONNECTED_SPEED(0.001 MB/s) for unreachable pairs. -

Task graph translation -- DAG tasks become

TaskGraphNodeobjects withcost = compute_cost. DAG edges becomeTaskGraphEdgeobjects withsize = data_size. -

Result extraction -- SAGA's schedule maps internal node names (

node_0,node_1) to task lists. The adapter maps these back to actual node IDs.

Routing Modes¶

ncsim supports three routing modes that determine how data flows between nodes

in the network. The routing mode is set via the routing key in the scenario

YAML or the --routing CLI flag.

Direct Routing (direct)¶

Single-hop routing that transfers data only on a direct link between the source and destination nodes.

- No multi-hop: if no direct link exists between two nodes, the transfer fails immediately.

- Simplest and fastest: no path computation, no caching overhead.

- Best for: topologies where every communicating pair of nodes has a dedicated link.

Transfer failure

Direct routing will fail any transfer where the source and destination

are not connected by a single declared link. If your scheduler may assign

communicating tasks to non-adjacent nodes, use widest_path or

shortest_path instead.

Widest Path Routing (widest_path)¶

Routes data along the path that maximizes the bottleneck bandwidth (max-min bandwidth). This is the optimal choice when transfer size dominates total transfer time.

- Algorithm: modified Dijkstra using a max-heap. At each step the

algorithm relaxes edges by taking

min(current_bottleneck, link_bandwidth), keeping the path whose minimum-bandwidth link is largest. - Multi-hop: intermediate nodes act as store-and-forward relays.

- Caching: paths are computed once and cached for the lifetime of the

simulation. Call

clear_cache()if the topology changes. - Transfer model: bottleneck bandwidth determines transfer rate; latencies are summed across all hops (store-and-forward).

Shortest Path Routing (shortest_path)¶

Routes data along the path that minimizes total latency (sum of per-link latencies). When all links have equal latency this degenerates to minimum hop count.

- Algorithm: standard Dijkstra on link latencies (min-heap).

- Multi-hop: intermediate nodes act as store-and-forward relays.

- Caching: paths are computed once and cached.

- Transfer model: latencies are summed; bottleneck bandwidth along the chosen path determines transfer rate.

Comparison¶

| Feature | Direct | Widest Path | Shortest Path |

|---|---|---|---|

| Algorithm | Direct lookup | Modified Dijkstra (max-min BW) | Standard Dijkstra (min latency) |

| Multi-hop | No | Yes | Yes |

| Optimizes | N/A | Bottleneck bandwidth | Total latency |

| Fails when | No direct link exists | No path exists | No path exists |

| Path caching | N/A | Yes | Yes |

| Best for | Simple topologies | Large transfers | Small / latency-sensitive transfers |

When Widest and Shortest Diverge¶

The two multi-hop modes choose different paths whenever a topology offers a trade-off between bandwidth and latency. Consider a diamond topology with two relay nodes:

graph LR

src -- "BW=20 MB/s<br/>lat=0.001s" --> relay_fast

relay_fast -- "BW=20 MB/s<br/>lat=0.001s" --> dst

src -- "BW=200 MB/s<br/>lat=0.05s" --> relay_wide

relay_wide -- "BW=200 MB/s<br/>lat=0.05s" --> dst

style relay_fast fill:#cce5ff,stroke:#004085

style relay_wide fill:#d4edda,stroke:#155724Shortest path picks src -> relay_fast -> dst (total latency 0.002 s,

bottleneck BW 20 MB/s).

Widest path picks src -> relay_wide -> dst (total latency 0.1 s,

bottleneck BW 200 MB/s).

Worked Example -- 100 MB Transfer¶

This corresponds to the widest_vs_shortest.yaml scenario, where tasks T0

(on src) and T1 (on dst) are connected by a 100 MB data edge. Each task

has compute_cost: 100 on nodes with compute_capacity: 100, so each task

takes 1.0 s to execute.

| Shortest Path | Widest Path | |

|---|---|---|

| Path | src -> relay_fast -> dst | src -> relay_wide -> dst |

| Bottleneck BW | 20 MB/s | 200 MB/s |

| Total latency | 0.002 s | 0.1 s |

| Transfer time | 100 / 20 + 0.002 = 5.002 s | 100 / 200 + 0.1 = 0.6 s |

| Makespan | 1.0 + 5.002 + 1.0 = 7.002 s | 1.0 + 0.6 + 1.0 = 2.6 s |

Widest-path routing is approximately 2.7x faster for this large transfer because the data volume dominates the total time, making bandwidth the controlling factor.

Rule of thumb

Use widest_path when transfer sizes are large relative to link

latencies. Use shortest_path when transfers are small and latency

dominates.

When Multi-hop Routing Is Needed¶

Why multi-hop matters

Multi-hop routing is required whenever the scheduler assigns communicating

tasks to non-adjacent nodes -- that is, nodes with no direct link

between them. Without widest_path or shortest_path routing, the

transfer will fail because direct routing cannot relay through

intermediate nodes.

This commonly occurs with HEFT and CPOP schedulers, which choose node assignments based on compute speed and may place dependent tasks on nodes that are not directly connected.

YAML Configuration¶

Routing is specified in the config section:

Or overridden on the command line:

Interference Models¶

Interference models reduce the effective bandwidth of a link when multiple

nearby links are simultaneously active. This inter-link contention is applied

on top of per-link fair sharing (where N flows on the same link each get

bandwidth / N).

The combined formula for a single flow on a link is:

The interference model is selected via the interference key in the scenario

YAML or the --interference CLI flag.

None (none)¶

No inter-link interference. The interference factor is always 1.0.

- Links operate at their full declared bandwidth regardless of concurrent transfers on other links.

- Appropriate for wired networks or scenarios where spectrum contention is not relevant.

Proximity (proximity)¶

A simple distance-based interference model. Links whose geometric midpoints fall within a configurable radius are considered to be contending.

- If k active links (including the link being evaluated) have midpoints

within the radius, the interference factor is

1/k. - Configure the radius with the

interference_radiusYAML key or the--interference-radiusCLI flag (default: 15.0 meters). - Dynamic: the factor is recalculated whenever a transfer starts or completes.

- Provides a quick approximation of spectrum contention without requiring WiFi-specific RF parameters.

CSMA/CA Clique (csma_clique)¶

An 802.11-aware static model that builds a conflict graph based on carrier sensing rules and divides bandwidth by the worst-case clique size.

- Uses RF propagation (path loss, carrier sensing threshold) to determine which links can sense each other's transmissions.

- Links that can sense each other form edges in a conflict graph. The Bron-Kerbosch algorithm (exact for networks with 50 or fewer links; greedy approximation otherwise) finds the maximum clique containing each link.

-

At setup time, each link's bandwidth is set to:

-

After setup, the interference factor is always 1.0 -- contention is baked into the bandwidth value and does not change during simulation.

- Conservative: represents the worst-case bound where all links in the largest clique transmit simultaneously.

- Requires RF parameters (

tx_power_dBm,freq_ghz,path_loss_exponent,cca_threshold_dBm, etc.) via therf:YAML section.

CSMA/CA Bianchi (csma_bianchi)¶

The most realistic WiFi interference model. It dynamically separates two distinct interference mechanisms:

1. Contention Domain (Conflict Graph Neighbors)¶

Links that appear as neighbors in the conflict graph operate under CSMA/CA: they cannot transmit simultaneously. Instead, they share airtime according to Bianchi's saturation throughput model.

Each of n contending links (including the link itself) gets a fraction

eta(n) / n of the channel, where eta(n) is the Bianchi MAC efficiency

for n stations.

Because CSMA prevents simultaneous transmission, contending links do not cause SINR degradation at each other's receivers.

2. Hidden Terminals (Non-Conflict-Graph Neighbors)¶

Active links that are not in the conflict graph of the evaluated link may transmit simultaneously, causing RF interference at the receiver. Their combined interference power degrades the SINR, which may force a lower MCS rate.

The SINR-based rate (R_SINR) is computed using only hidden terminal

interference. The base rate (R_base) is the SNR-only PHY rate.

Combined Factor¶

Where:

n = 1 + |active contending neighbors|eta(n)= Bianchi MAC efficiency for n stationsR_SINR= MCS rate under SINR (hidden terminal degradation)R_base= MCS rate under SNR only (no interference)

The factor is recalculated whenever a transfer starts or completes, making this a fully dynamic model.

config:

interference: csma_bianchi

rf:

tx_power_dBm: 20

freq_ghz: 5.0

path_loss_exponent: 3.0

noise_floor_dBm: -95

cca_threshold_dBm: -82

channel_width_mhz: 20

wifi_standard: "ax"

Key insight

CSMA prevents simultaneous transmission within the contention domain, so

contending links affect throughput via time-sharing, not via SINR

degradation. Only hidden terminals -- links outside the conflict graph --

contribute to SINR reduction. This separation is what distinguishes

csma_bianchi from simpler models.

Comparison¶

| Feature | None | Proximity | CSMA Clique | CSMA Bianchi |

|---|---|---|---|---|

| Interference type | None | Distance-based | Carrier sensing | SINR + MAC |

| Dynamic | N/A | Yes | No (static) | Yes |

| WiFi-aware | No | No | Yes | Yes |

| RF parameters required | No | No | Yes | Yes |

| Accuracy | N/A | Low | Medium | High |

| Use case | Wired networks | Quick approximation | WiFi without SINR | Realistic WiFi |

Worked Example: Proximity Interference¶

This example uses the interference_test.yaml scenario.

Topology¶

Four nodes with two parallel links, 5 units apart:

graph LR

n0["n0 (0,0)"] -- "l01: BW=100 MB/s" --> n1["n1 (5,0)"]

n2["n2 (0,5)"] -- "l23: BW=100 MB/s" --> n3["n3 (5,5)"]- Link

l01midpoint: (2.5, 0) - Link

l23midpoint: (2.5, 5) - Distance between midpoints: 5.0 units

Two DAG edges create simultaneous transfers: T0 -> T1 on l01 (100 MB) and

T2 -> T3 on l23 (100 MB). Each task has compute_cost: 10 on nodes with

compute_capacity: 1000, so task execution takes 0.01 s.

Without Interference (none)¶

| Transfer | Bandwidth | Time | Total |

|---|---|---|---|

| T0 -> T1 on l01 | 100 MB/s | 100 / 100 = 1.0 s | 0.01 + 1.0 + 0.01 = 1.02 s |

| T2 -> T3 on l23 | 100 MB/s | 100 / 100 = 1.0 s | 0.01 + 1.0 + 0.01 = 1.02 s |

Makespan: 1.02 s

With Proximity Interference (radius=10)¶

Since the midpoint distance (5.0) is less than the radius (10.0), both links

contend. With k=2 active contending links, the interference factor is 1/k = 0.5.

| Transfer | Effective BW | Time | Total |

|---|---|---|---|

| T0 -> T1 on l01 | 100 * 0.5 = 50 MB/s | 100 / 50 = 2.0 s | 0.01 + 2.0 + 0.01 = 2.02 s |

| T2 -> T3 on l23 | 100 * 0.5 = 50 MB/s | 100 / 50 = 2.0 s | 0.01 + 2.0 + 0.01 = 2.02 s |

Makespan: 2.02 s -- transfers take 2x longer due to interference.

YAML Configuration¶

Proximity Model¶

WiFi Models (Clique or Bianchi)¶

config:

interference: csma_bianchi # or csma_clique

rf:

tx_power_dBm: 20

freq_ghz: 5.0

path_loss_exponent: 3.0

noise_floor_dBm: -95

cca_threshold_dBm: -82

channel_width_mhz: 20

wifi_standard: "ax"

shadow_fading_sigma: 0.0

rts_cts: false

CLI Overrides¶

WiFi Model¶

The WiFi model replaces manually specified link bandwidths with physically

grounded data rates derived from RF propagation, SNR-based MCS selection,

and 802.11 MAC contention. It is used by the csma_clique and csma_bianchi

interference models.

The implementation lives in two modules:

ncsim/models/wifi.py-- RF propagation, MCS tables, conflict graph construction, Bianchi MAC efficiencyncsim/models/interference.py--CsmaCliqueInterferenceandCsmaBianchiInterferenceclasses

Path Loss and Received Power¶

ncsim uses the log-distance path loss model with a Friis free-space reference at distance d0 = 1 m.

Friis Reference Loss¶

The free-space path loss at the reference distance d0:

At 5 GHz with d0 = 1 m, this evaluates to approximately 46.4 dB. At 2.4 GHz with d0 = 1 m, approximately 40.0 dB.

Log-Distance Path Loss¶

For distance d >= d0:

Where n is the path loss exponent:

| Environment | Typical n |

|---|---|

| Free space | 2.0 |

| Indoor (open office) | 2.5 - 3.0 |

| Indoor (with walls) | 3.0 - 4.0 |

| Dense indoor | 4.0 - 5.0 |

Received Power¶

Where X_SF is the shadow fading component (0 by default, see

Shadow Fading below).

SNR¶

Signal-to-noise ratio in dB:

Where N0 is the noise floor (default -95 dBm).

MCS Rate Adaptation¶

The received SNR selects the highest MCS (Modulation and Coding Scheme) whose minimum SNR threshold is met. If the SNR falls below 5 dB (the lowest threshold), the link is considered not viable and the rate is 0.

MCS Tables (1 Spatial Stream, 20 MHz Base)¶

802.11n (HT)¶

| MCS | Modulation | Min SNR (dB) | Rate (Mbps) |

|---|---|---|---|

| 0 | BPSK 1/2 | 5 | 6.5 |

| 1 | QPSK 1/2 | 8 | 13.0 |

| 2 | QPSK 3/4 | 11 | 19.5 |

| 3 | 16-QAM 1/2 | 14 | 26.0 |

| 4 | 16-QAM 3/4 | 18 | 39.0 |

| 5 | 64-QAM 2/3 | 22 | 52.0 |

| 6 | 64-QAM 3/4 | 25 | 58.5 |

| 7 | 64-QAM 5/6 | 29 | 65.0 |

802.11ac (VHT)¶

| MCS | Modulation | Min SNR (dB) | Rate (Mbps) |

|---|---|---|---|

| 0 | BPSK 1/2 | 5 | 6.5 |

| 1 | QPSK 1/2 | 8 | 13.0 |

| 2 | QPSK 3/4 | 11 | 19.5 |

| 3 | 16-QAM 1/2 | 14 | 26.0 |

| 4 | 16-QAM 3/4 | 18 | 39.0 |

| 5 | 64-QAM 2/3 | 22 | 52.0 |

| 6 | 64-QAM 3/4 | 25 | 58.5 |

| 7 | 64-QAM 5/6 | 29 | 65.0 |

| 8 | 256-QAM 3/4 | 32 | 78.0 |

| 9 | 256-QAM 5/6 | 35 | 86.7 |

802.11ax (HE)¶

| MCS | Modulation | Min SNR (dB) | Rate (Mbps) |

|---|---|---|---|

| 0 | BPSK 1/2 | 5 | 8.6 |

| 1 | QPSK 1/2 | 8 | 17.2 |

| 2 | QPSK 3/4 | 11 | 25.8 |

| 3 | 16-QAM 1/2 | 14 | 34.4 |

| 4 | 16-QAM 3/4 | 18 | 51.6 |

| 5 | 64-QAM 2/3 | 22 | 68.8 |

| 6 | 64-QAM 3/4 | 25 | 77.4 |

| 7 | 64-QAM 5/6 | 29 | 86.0 |

| 8 | 256-QAM 3/4 | 32 | 103.2 |

| 9 | 256-QAM 5/6 | 35 | 114.7 |

| 10 | 1024-QAM 3/4 | 38 | 129.0 |

| 11 | 1024-QAM 5/6 | 41 | 143.4 |

Summary by Standard¶

| Standard | MCS Range | Peak Rate (20 MHz) | Modulation Range |

|---|---|---|---|

| 802.11n | 0--7 | 65.0 Mbps | BPSK -- 64-QAM |

| 802.11ac | 0--9 | 86.7 Mbps | BPSK -- 256-QAM |

| 802.11ax | 0--11 | 143.4 Mbps | BPSK -- 1024-QAM |

Channel Width Scaling¶

Rates scale linearly with channel width. The table values are for 20 MHz; multiply by the channel width factor:

| Channel Width | Factor |

|---|---|

| 20 MHz | 1x |

| 40 MHz | 2x |

| 80 MHz | 4x |

| 160 MHz | 8x |

For example, 802.11ax MCS 11 at 80 MHz: 143.4 * 4 = 573.6 Mbps.

Unit Conversion¶

ncsim uses MB/s internally for bandwidth. PHY rates are converted:

Conflict Graph¶

The conflict graph determines which links cannot transmit simultaneously under the 802.11 CSMA/CA protocol model.

Carrier Sensing Range¶

The maximum distance at which a transmission triggers Clear Channel Assessment (CCA):

Where theta_CCA is the CCA threshold (default -82 dBm).

Conflict Rules¶

Two links A and B conflict if carrier sensing prevents them from transmitting at the same time.

Without RTS/CTS (default): links A and B conflict if:

- Transmitter of A can sense any node of link B, OR

- Transmitter of B can sense any node of link A

"Can sense" means the distance between the two nodes is within the carrier sensing range.

With RTS/CTS (rts_cts: true): links A and B conflict if:

- Any node of link A can sense any node of link B

RTS/CTS extends the conflict zone to protect receivers, which reduces hidden terminal problems but increases contention.

graph TB

subgraph "Without RTS/CTS"

direction LR

txA1["TX(A)"] -->|"sense?"| txB1["TX(B)"]

txA1 -->|"sense?"| rxB1["RX(B)"]

txB1 -->|"sense?"| txA1b["TX(A)"]

txB1 -->|"sense?"| rxA1["RX(A)"]

end

subgraph "With RTS/CTS"

direction LR

txA2["TX(A)"] -->|"sense?"| txB2["TX(B)"]

txA2 -->|"sense?"| rxB2["RX(B)"]

rxA2["RX(A)"] -->|"sense?"| txB2b["TX(B)"]

rxA2 -->|"sense?"| rxB2b["RX(B)"]

endMax Clique Computation¶

For each link, ncsim computes the maximum clique size -- the largest set of mutually conflicting links that includes the given link.

- Exact (Bron-Kerbosch with pivoting): used when the network has 50 or fewer links. Finds all maximal cliques and records the largest one containing each link.

- Greedy approximation: used for larger networks. Builds a clique greedily starting from each link by adding the most-connected candidate that is adjacent to all current clique members.

Bianchi MAC Efficiency¶

Bianchi's saturation throughput model computes the MAC-layer efficiency

eta(n) for n contending stations sharing the channel under 802.11 DCF.

Coupled Equations¶

The model solves for the transmission probability tau and collision

probability p via fixed-point iteration:

Where:

- W = 16 (CWmin, minimum contention window for 802.11)

- m = 6 (maximum backoff stage; CWmax = W * 2^m = 1024)

Efficiency Values¶

From the converged tau and p, the model computes idle, success, and

collision probabilities per slot, then derives the fraction of channel time

carrying successful payload:

| n (stations) | eta(n) | Per-station share eta(n)/n |

|---|---|---|

| 1 | 1.000 | 1.000 |

| 2 | ~0.88 | ~0.44 |

| 5 | ~0.72 | ~0.14 |

| 10 | ~0.59 | ~0.059 |

| 20 | ~0.47 | ~0.024 |

Properties:

eta(1) = 1.0-- a single station has no contention overhead.eta(n)is monotonically decreasing but always positive.- The per-station share

eta(n)/ndecreases faster than1/ndue to collision overhead.

ncsim precomputes a lookup table for n = 1 to 100 at startup.

CSMA Clique Model¶

The static WiFi interference model. Contention is computed once at setup and baked into each link's bandwidth.

Bandwidth Assignment¶

Where omega(l) is the maximum clique size containing link l.

Interference Factor¶

Always 1.0. Since contention is already reflected in the bandwidth, the interference model does not apply any additional reduction during simulation.

When To Use¶

csma_clique is appropriate when you want WiFi-aware bandwidth estimation

without the computational cost of dynamic recalculation. It provides a

conservative worst-case bound: the bandwidth assumes all links in the

largest clique are always active, even if only a subset actually transmits

at any given time.

CSMA Bianchi Model¶

The dynamic WiFi interference model. It correctly separates two interference mechanisms and recalculates the factor whenever transfers start or complete.

Mechanism 1: Contention Domain Time-Sharing¶

Links that are neighbors in the conflict graph operate under CSMA/CA and cannot transmit simultaneously. Each of n contending links gets:

No SINR degradation occurs from these links because CSMA prevents concurrent transmission.

Mechanism 2: Hidden Terminal SINR Degradation¶

Active links that are not in the conflict graph may transmit simultaneously, causing interference at the receiver. The SINR is computed in the linear domain:

Where P_interferers includes only hidden terminal transmitter powers as

received at the evaluated link's receiver node. The SINR determines a

(potentially lower) MCS rate R_SINR.

Combined Factor¶

The factor is clamped to the range [0.01, 1.0].

Recalculation¶

When any transfer starts or completes, all other active links have their factors recalculated. This ensures both contention domain changes and hidden terminal changes are captured symmetrically.

flowchart TD

A["Transfer starts/completes on link L"] --> B["Identify active links"]

B --> C["For each active link K != L"]

C --> D["Classify neighbors:<br/>contending vs. hidden"]

D --> E["Compute contention_factor = eta(n)/n"]

D --> F["Compute SINR from hidden terminals"]

F --> G["Select MCS from SINR -> R_SINR"]

E --> H["factor = (R_SINR / R_base) * (eta(n) / n)"]

G --> H

H --> I["Recalculate transfer completion time"]Key design decision

Contending links affect throughput via time-sharing (Bianchi), not via SINR. Only hidden terminals cause SINR degradation. Conflating the two (e.g., including contending links in the SINR calculation) would double-count their impact and produce unrealistically low throughput.

Shadow Fading¶

Optional log-normal shadow fading adds randomness to path loss. In the dB domain, fading values are drawn from a Gaussian distribution N(0, sigma).

- Per-node-pair: each pair of nodes gets its own fading value.

- Symmetric: fading(A, B) = fading(B, A).

- Deterministic from seed: the same seed always produces the same fading map, ensuring reproducibility.

- Configured via

shadow_fading_sigma(default 0.0, meaning no fading).

The fading value is subtracted from received power:

Where X_SF ~ N(0, sigma) for the specific node pair.

RF Configuration Parameters¶

All RF parameters are specified in the rf: section of the scenario YAML.

Several can also be overridden via CLI flags.

| YAML Key | CLI Flag | Unit | Default | Description |

|---|---|---|---|---|

tx_power_dBm |

--tx-power |

dBm | 20.0 | Transmit power (typical AP: 15--23 dBm) |

freq_ghz |

--freq |

GHz | 5.0 | Carrier frequency (2.4 or 5.0) |

path_loss_exponent |

--path-loss-exponent |

-- | 3.0 | Path loss exponent n (2.0 = free space, 3--4 = indoor) |

noise_floor_dBm |

-- | dBm | -95.0 | Effective noise floor including receiver noise figure |

cca_threshold_dBm |

-- | dBm | -82.0 | CCA signal detect threshold |

channel_width_mhz |

-- | MHz | 20 | Channel width (20, 40, 80, 160) |

wifi_standard |

--wifi-standard |

-- | ax |

MCS table selection (n, ac, ax) |

shadow_fading_sigma |

-- | dB | 0.0 | Std dev of log-normal shadow fading (0 = none) |

rts_cts |

--rts-cts |

-- | false |

Enable RTS/CTS (extends conflict zone) |

Full YAML Example¶

config:

interference: csma_bianchi

rf:

tx_power_dBm: 20

freq_ghz: 5.0

path_loss_exponent: 3.0

noise_floor_dBm: -95

cca_threshold_dBm: -82

channel_width_mhz: 20

wifi_standard: "ax"

shadow_fading_sigma: 0.0

rts_cts: false

Omitting link bandwidth

When using csma_clique or csma_bianchi, link bandwidth is derived

from RF parameters and node positions. You can omit the bandwidth key

from link definitions -- ncsim will compute it automatically. If you do

specify a bandwidth, it will be kept as-is (not overwritten by the WiFi

model), which is useful for mixing wired and wireless links in the same

topology.

CLI Override Example¶

Scenarios

YAML Reference¶

This page documents every field available in an ncsim scenario YAML file, with a complete annotated example and a field-by-field reference table.

Complete Annotated Example¶

The following YAML shows every supported field with inline comments explaining its purpose:

scenario:

name: "My Experiment"

network:

nodes:

- id: n0

compute_capacity: 100 # compute units per second

position: {x: 0, y: 0} # meters (for interference and viz)

- id: n1

compute_capacity: 50

position: {x: 10, y: 0}

links:

- id: l01

from: n0 # directional: n0 -> n1

to: n1

bandwidth: 100 # MB/s

latency: 0.001 # seconds

dags:

- id: dag_1

inject_at: 0.0 # simulation time to inject

tasks:

- id: T0

compute_cost: 100 # compute units (runtime = cost / capacity)

pinned_to: n0 # optional: force task to this node

- id: T1

compute_cost: 200

edges:

- from: T0

to: T1

data_size: 50 # MB

config:

scheduler: heft # heft | cpop | round_robin

seed: 42

routing: direct # direct | widest_path | shortest_path

interference: proximity # none | proximity | csma_clique | csma_bianchi

interference_radius: 15.0 # meters (proximity model only)

rf: # WiFi models only

tx_power_dBm: 20

freq_ghz: 5.0

path_loss_exponent: 3.0

noise_floor_dBm: -95

cca_threshold_dBm: -82

channel_width_mhz: 20

wifi_standard: ax

shadow_fading_sigma: 0

rts_cts: false

Field-by-Field Reference¶

Scenario Root¶

| Field | Type | Required | Default | Description |

|---|---|---|---|---|

scenario.name |

string | No | File stem | Human-readable name for the scenario. Used in CLI output and result metadata. |

Nodes (scenario.network.nodes[])¶

| Field | Type | Required | Default | Description |

|---|---|---|---|---|

nodes[].id |

string | Yes | -- | Unique identifier for the node. Referenced by links, pinned_to, and routing. |

nodes[].compute_capacity |

float | Yes | -- | Processing speed in compute units per second. Task runtime = compute_cost / compute_capacity. |

nodes[].position |

object | No | {x: 0, y: 0} |

2D position in meters. Used by interference models (proximity, CSMA) and the visualization. Contains x and y float fields. |

Links (scenario.network.links[])¶

| Field | Type | Required | Default | Description |

|---|---|---|---|---|

links[].id |

string | Yes | -- | Unique identifier for the link. |

links[].from |

string | Yes | -- | Source node ID. Links are directional: data flows from from to to. |

links[].to |

string | Yes | -- | Destination node ID. |

links[].bandwidth |

float | No | Derived from RF | Link capacity in MB/s. When using csma_clique or csma_bianchi, links without explicit bandwidth derive their rate from RF parameters. Links WITH explicit bandwidth keep their stated value (useful for mixed wired/wireless topologies). |

links[].latency |

float | No | 0.0 |

Fixed propagation delay in seconds, added to every transfer on this link. |

DAGs (scenario.dags[])¶

| Field | Type | Required | Default | Description |

|---|---|---|---|---|

dags[].id |

string | Yes | -- | Unique identifier for the DAG. |

dags[].inject_at |

float | No | 0.0 |

Simulation time (in seconds) at which this DAG is injected into the system. |

Tasks (scenario.dags[].tasks[])¶

| Field | Type | Required | Default | Description |

|---|---|---|---|---|

tasks[].id |

string | Yes | -- | Unique identifier for the task within its DAG. |

tasks[].compute_cost |

float | Yes | -- | Total compute work in compute units. Runtime on a node = compute_cost / node.compute_capacity. |